ELI5 is a Python package which helps to debug machine learning classifiers and explain their predictions.

It provides support for the following machine learning frameworks and packages:

- scikit-learn. Currently ELI5 allows to explain weights and predictions of scikit-learn linear classifiers and regressors, print decision trees as text or as SVG, show feature importances and explain predictions of decision trees and tree-based ensembles. ELI5 understands text processing utilities from scikit-learn and can highlight text data accordingly. Pipeline and FeatureUnion are supported. It also allows to debug scikit-learn pipelines which contain HashingVectorizer, by undoing hashing.

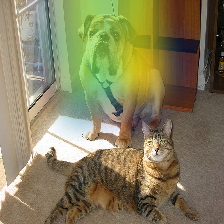

- Keras - explain predictions of image classifiers via Grad-CAM visualizations.

- xgboost - show feature importances and explain predictions of XGBClassifier, XGBRegressor and xgboost.Booster.

- LightGBM - show feature importances and explain predictions of LGBMClassifier and LGBMRegressor.

- CatBoost - show feature importances of CatBoostClassifier, CatBoostRegressor and catboost.CatBoost.

- lightning - explain weights and predictions of lightning classifiers and regressors.

- sklearn-crfsuite. ELI5 allows to check weights of sklearn_crfsuite.CRF models.

ELI5 also implements several algorithms for inspecting black-box models (see Inspecting Black-Box Estimators):

- TextExplainer allows to explain predictions of any text classifier using LIME algorithm (Ribeiro et al., 2016). There are utilities for using LIME with non-text data and arbitrary black-box classifiers as well, but this feature is currently experimental.

- Permutation importance method can be used to compute feature importances for black box estimators.

Explanation and formatting are separated; you can get text-based explanation

to display in console, HTML version embeddable in an IPython notebook

or web dashboards, a pandas.DataFrame object if you want to process

results further, or JSON version which allows to implement custom rendering

and formatting on a client.

License is MIT.

Check docs for more.