Dataflow kit ("DFK") is a web scraping framework for Gophers. It extracts data from web pages, following the specified CSS selectors.

You can use it in many ways for data mining, data processing or archiving.

A web-scraping pipeline consists of three general components:

- Downloading an HTML web page (Fetch service);

- Parsing an HTML page and retrieving the data we're interested in (Parse service);

- Encoding parsed data to CSV, MS Excel, JSON, JSON Lines or XML format.
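The encoding step can be sketched with Go's standard library alone. This is a minimal illustration that assumes extracted records are flat string maps. It is not DFK's own encoder: `encodeCSV` and `encodeJSONLines` are hypothetical names, and the MS Excel and XML formats are not covered.

```go
package main

import (
	"bytes"
	"encoding/csv"
	"encoding/json"
	"fmt"
)

// encodeCSV writes records as CSV with a header row. The fields slice fixes
// the column order, because Go map iteration order is not defined.
func encodeCSV(fields []string, records []map[string]string) (string, error) {
	var buf bytes.Buffer
	w := csv.NewWriter(&buf)
	if err := w.Write(fields); err != nil {
		return "", err
	}
	for _, r := range records {
		row := make([]string, len(fields))
		for i, f := range fields {
			row[i] = r[f] // a missing field becomes an empty cell
		}
		if err := w.Write(row); err != nil {
			return "", err
		}
	}
	w.Flush() // csv.Writer is buffered; flush before reading buf
	return buf.String(), w.Error()
}

// encodeJSONLines writes one JSON object per line (JSON Lines).
func encodeJSONLines(records []map[string]string) (string, error) {
	var buf bytes.Buffer
	enc := json.NewEncoder(&buf) // Encode appends "\n" after each value
	for _, r := range records {
		if err := enc.Encode(r); err != nil {
			return "", err
		}
	}
	return buf.String(), nil
}

func main() {
	// Sample records, shaped like the output of a parse step.
	records := []map[string]string{
		{"Title": "sharp objects", "Price": "47.82"},
		{"Title": "soumission", "Price": "50.10"},
	}
	csvOut, err := encodeCSV([]string{"Title", "Price"}, records)
	if err != nil {
		panic(err)
	}
	fmt.Print(csvOut)
	jsonl, err := encodeJSONLines(records)
	if err != nil {
		panic(err)
	}
	fmt.Print(jsonl)
}
```

Passing an explicit field list keeps CSV columns stable across runs. `encoding/json` already sorts map keys, so the JSON Lines output is deterministic as well.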

The fetch.d server downloads the content of HTML web pages. Depending on the fetcher type, page content is downloaded using either the Base fetcher or the Chrome fetcher.

The Base fetcher uses the standard Go HTTP client to fetch pages as is. It is faster than the Chrome fetcher, but it cannot render dynamic, JavaScript-driven web pages.

The Chrome fetcher is intended for rendering dynamic, JavaScript-based content. It sends requests to Chrome running in headless mode.

A fetched web page is then passed to the parse.d service.

parse.d is the service that extracts data from a downloaded web page following the rules listed in a JSON configuration file. Extracted data is returned in CSV, MS Excel, JSON or XML format.

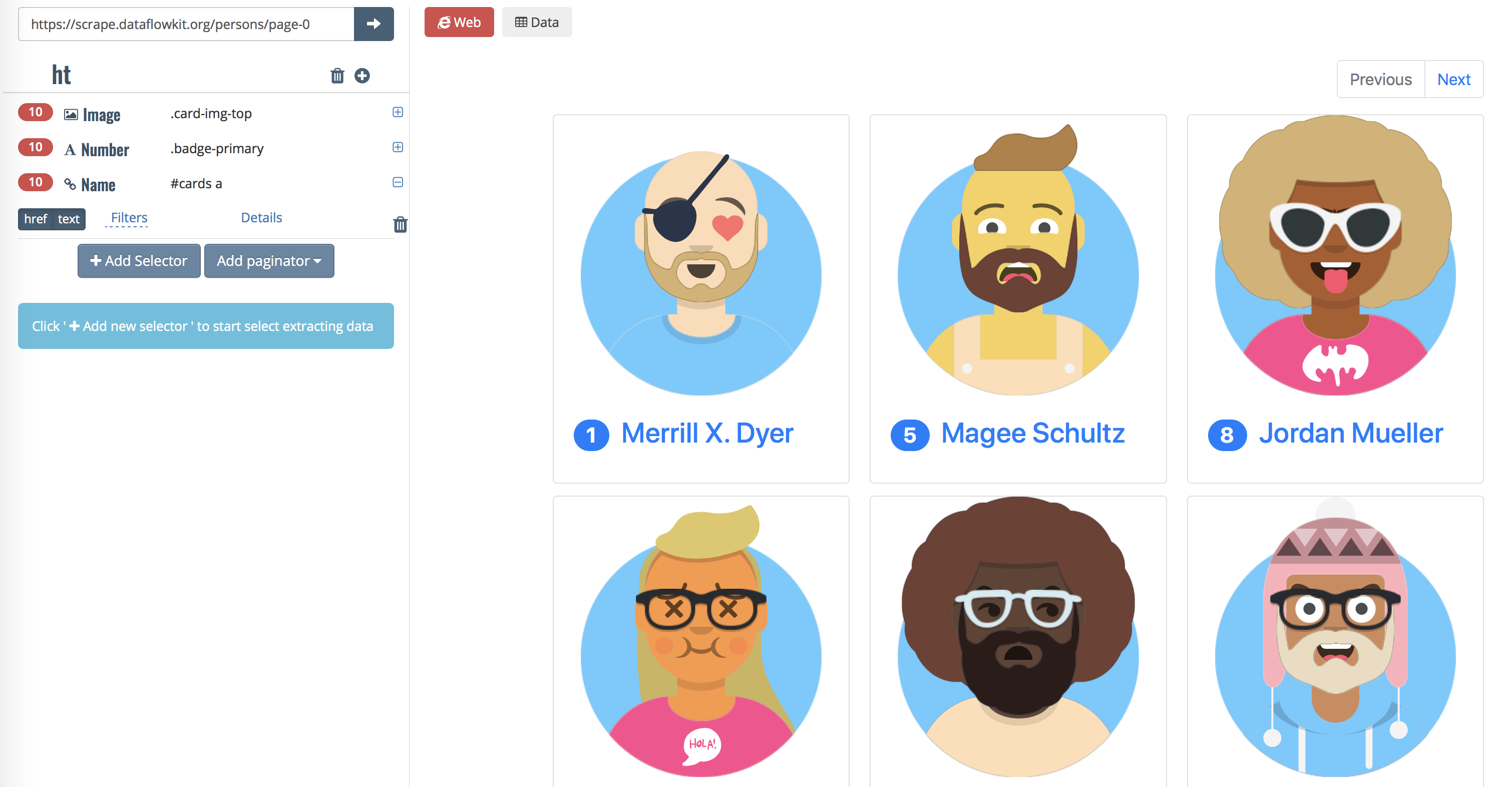

Note: Sometimes the Parse service cannot extract data from pages retrieved by the default Base fetcher. Empty results may be returned when parsing JavaScript-generated pages. In that case, the Parse service automatically attempts to use the Chrome fetcher to render the same dynamic, JavaScript-driven content. Have a look at https://scrape.dataflowkit.com/persons/page-0, which is a sample JavaScript-driven web page.

- Scraping of JavaScript-generated pages;

- Data extraction from paginated websites;

- Processing of infinitely scrolled pages;

- Scraping of websites behind a login form;

- Cookie and session handling;

- Following links and processing detail pages;

- Managing delays between requests per domain;

- Following robots.txt directives;

- Saving intermediate data in Diskv or MongoDB. The storage interface is flexible enough to add more storage types easily;

- Encoding results to CSV, MS Excel, JSON (Lines) and XML formats;

- Dataflow kit is fast. It takes about 4-6 seconds to fetch and then parse 50 pages.

- Dataflow kit is suitable for processing quite large volumes of data. Our tests show that parsing approximately 4 million pages takes about 7 hours.
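Converting the two figures above into throughput makes them easier to compare. The first figure covers fetching and parsing, while the second mentions only parsing. The source does not describe either test setup, so the difference between the two rates should not be read as a measured comparison.

$$
\frac{50\ \text{pages}}{6\ \text{s}} \approx 8.3\ \text{pages/s}
\quad\text{to}\quad
\frac{50\ \text{pages}}{4\ \text{s}} = 12.5\ \text{pages/s}
$$

$$
\frac{4\,000\,000\ \text{pages}}{7 \times 3600\ \text{s}} = \frac{4\,000\,000}{25\,200}\ \text{pages/s} \approx 159\ \text{pages/s}
$$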

Install Dataflow kit with:

```bash
go get -u github.com/slotix/dataflowkit
```

- Install Docker and Docker Compose.

- Start the services.

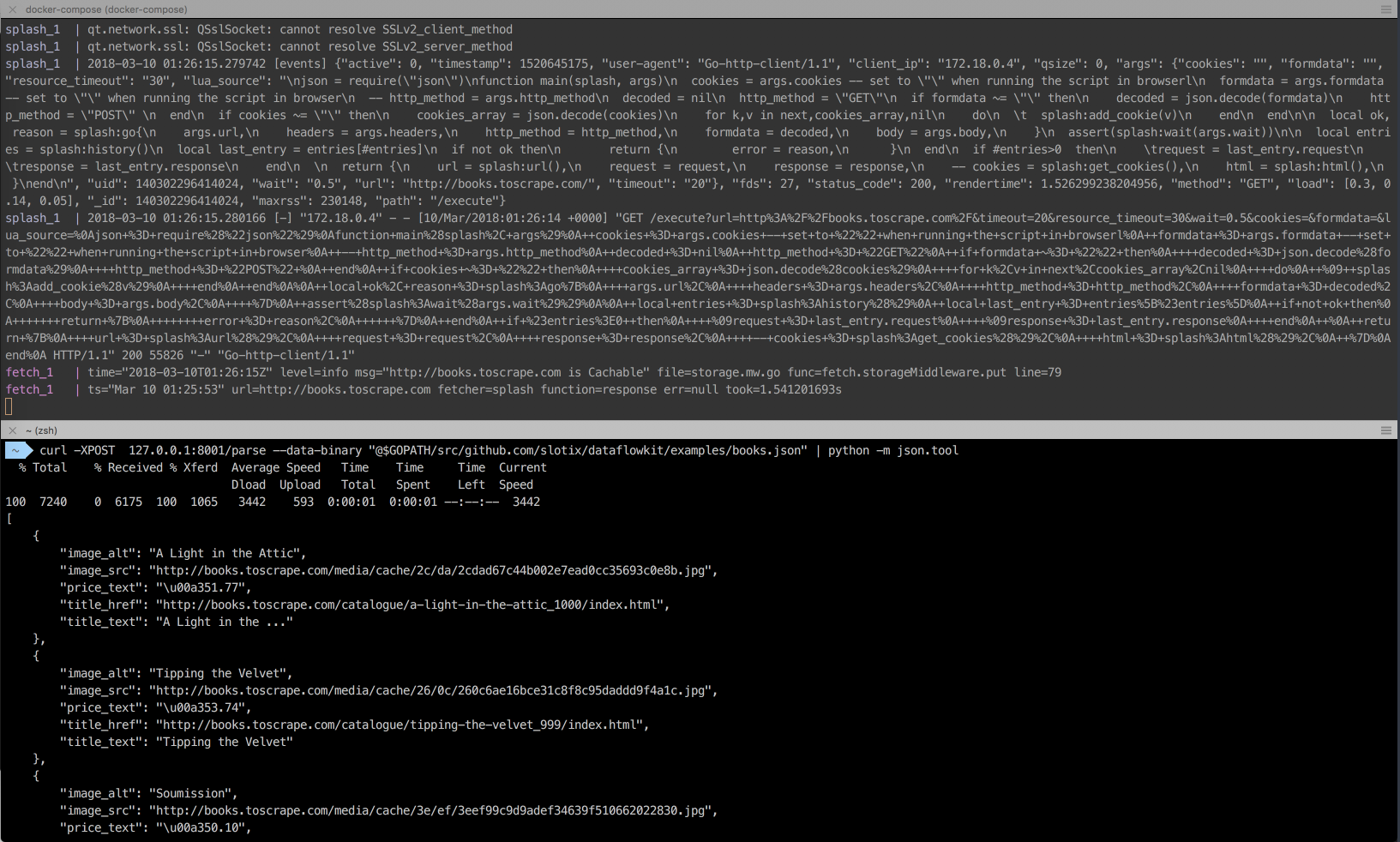

```bash
cd $GOPATH/src/github.com/slotix/dataflowkit && docker-compose up
```

This command automatically pulls the Docker images and starts the services.

- Launch parsing in a second terminal window by sending a POST request to the parse daemon. Sample JSON configuration files for testing are available in the /examples folder.

```bash
curl -XPOST 127.0.0.1:8001/parse --data-binary "@$GOPATH/src/github.com/slotix/dataflowkit/examples/books.toscrape.com.json"
```

Here is a sample JSON configuration file:

```json
{
  "name": "collection",
  "request": {
    "url": "https://example.com"
  },
  "fields": [
    {
      "name": "Title",
      "selector": ".product-container a",
      "extractor": {
        "types": ["text", "href"],
        "filters": [
          "trim",
          "lowerCase"
        ],
        "params": {
          "includeIfEmpty": false
        }
      }
    },
    {
      "name": "Image",
      "selector": "#product-container img",
      "extractor": {
        "types": ["alt", "src", "width", "height"],
        "filters": [
          "trim",
          "upperCase"
        ]
      }
    },
    {
      "name": "Buyinfo",
      "selector": ".buy-info",
      "extractor": {
        "types": ["text"],
        "params": {
          "includeIfEmpty": false
        }
      }
    }
  ],
  "paginator": {
    "selector": ".next",
    "attr": "href",
    "maxPages": 3
  },
  "format": "json",
  "fetcherType": "chrome",
  "paginateResults": false
}
```
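To work with such a configuration from Go, it can be decoded into structs that mirror its keys. The types below are hypothetical and follow only the JSON shown here, so DFK's own types may differ. The checks in `parseConfig` (a non-empty `request.url` and at least one field) are illustrative choices, not DFK's documented validation rules.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Hypothetical Go types mirroring the sample configuration's JSON keys.
type Config struct {
	Name            string     `json:"name"`
	Request         Request    `json:"request"`
	Fields          []Field    `json:"fields"`
	Paginator       *Paginator `json:"paginator,omitempty"`
	Format          string     `json:"format"`
	FetcherType     string     `json:"fetcherType"`
	PaginateResults bool       `json:"paginateResults"`
}

type Request struct {
	URL string `json:"url"`
}

type Field struct {
	Name      string    `json:"name"`
	Selector  string    `json:"selector"`
	Extractor Extractor `json:"extractor"`
}

type Extractor struct {
	Types   []string               `json:"types"`
	Filters []string               `json:"filters,omitempty"`
	Params  map[string]interface{} `json:"params,omitempty"`
}

type Paginator struct {
	Selector string `json:"selector"`
	Attr     string `json:"attr"`
	MaxPages int    `json:"maxPages"`
}

// parseConfig decodes a configuration and applies a few illustrative checks.
func parseConfig(data []byte) (*Config, error) {
	var c Config
	if err := json.Unmarshal(data, &c); err != nil {
		return nil, err
	}
	if c.Request.URL == "" {
		return nil, fmt.Errorf("config %q: request.url is empty", c.Name)
	}
	if len(c.Fields) == 0 {
		return nil, fmt.Errorf("config %q: no fields defined", c.Name)
	}
	return &c, nil
}

func main() {
	data := []byte(`{
  "name": "collection",
  "request": {"url": "https://example.com"},
  "fields": [{"name": "Buyinfo", "selector": ".buy-info",
              "extractor": {"types": ["text"], "params": {"includeIfEmpty": false}}}],
  "paginator": {"selector": ".next", "attr": "href", "maxPages": 3},
  "format": "json",
  "fetcherType": "chrome"
}`)
	cfg, err := parseConfig(data)
	if err != nil {
		fmt.Println("invalid config:", err)
		return
	}
	fmt.Println(cfg.Name, len(cfg.Fields), cfg.Paginator.MaxPages, cfg.FetcherType) // collection 1 3 chrome
}
```

`Paginator` is a pointer so that a configuration without pagination decodes to `nil` rather than to a zero-valued paginator.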

Read more about scraper configuration JSON files in our GoDoc reference.

Extractors and filters are described at https://godoc.org/github.com/slotix/dataflowkit/extract
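Filters such as `trim`, `lowerCase` and `upperCase` in the sample configuration are applied to extracted values in the order listed. The sketch below maps those three names to standard-library string functions. It assumes that `trim` means trimming surrounding whitespace and that an unknown filter name is an error. See the extract package reference above for DFK's actual filter semantics.

```go
package main

import (
	"fmt"
	"strings"
)

// filters maps filter names used in the sample config to string
// transformations. Only the three names that appear there are sketched.
var filters = map[string]func(string) string{
	"trim":      strings.TrimSpace,
	"lowerCase": strings.ToLower,
	"upperCase": strings.ToUpper,
}

// applyFilters runs the named filters in order over an extracted value.
func applyFilters(value string, names []string) (string, error) {
	for _, name := range names {
		f, ok := filters[name]
		if !ok {
			return "", fmt.Errorf("unknown filter %q", name)
		}
		value = f(value)
	}
	return value, nil
}

func main() {
	v, err := applyFilters("  A Light in the Attic \n", []string{"trim", "lowerCase"})
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Printf("%q\n", v) // "a light in the attic"
}
```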

- To stop the services, press Ctrl+C and run:

```bash
cd $GOPATH/src/github.com/slotix/dataflowkit && docker-compose down --remove-orphans --volumes
```

Click on the image to see the CLI in action.

- Start the Chrome Docker container:

```bash
docker run --init -it --rm -d --name chrome --shm-size=1024m -p=127.0.0.1:9222:9222 --cap-add=SYS_ADMIN \
  yukinying/chrome-headless-browser
```

Headless Chrome is used for fetching web pages to feed a Dataflow kit parser.
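A quick way to confirm that the container is reachable is to query Chrome's DevTools HTTP endpoint `/json/version` on the published port. This endpoint is part of Chrome's remote-debugging interface, not of Dataflow kit. The sketch assumes the image exposes it on `127.0.0.1:9222` as mapped by the `docker run` command above. `chromeVersion` and `decodeVersion` are hypothetical helper names.

```go
package main

import (
	"encoding/json"
	"fmt"
	"io"
	"net/http"
	"time"
)

// versionInfo holds two of the fields returned by Chrome's DevTools HTTP
// endpoint /json/version.
type versionInfo struct {
	Browser              string `json:"Browser"`
	WebSocketDebuggerURL string `json:"webSocketDebuggerUrl"`
}

// decodeVersion parses a /json/version response body.
func decodeVersion(body []byte) (versionInfo, error) {
	var v versionInfo
	err := json.Unmarshal(body, &v)
	return v, err
}

// chromeVersion asks a running headless Chrome for its version info, which
// is a quick way to check that the remote-debugging port is reachable.
func chromeVersion(client *http.Client, base string) (versionInfo, error) {
	resp, err := client.Get(base + "/json/version")
	if err != nil {
		return versionInfo{}, err
	}
	defer resp.Body.Close()
	if resp.StatusCode != http.StatusOK {
		return versionInfo{}, fmt.Errorf("unexpected status %s", resp.Status)
	}
	body, err := io.ReadAll(resp.Body)
	if err != nil {
		return versionInfo{}, err
	}
	return decodeVersion(body)
}

func main() {
	client := &http.Client{Timeout: 5 * time.Second}
	// Address published by the docker run command above.
	v, err := chromeVersion(client, "http://127.0.0.1:9222")
	if err != nil {
		fmt.Println("headless Chrome not reachable:", err)
		return
	}
	fmt.Println(v.Browser)
	fmt.Println(v.WebSocketDebuggerURL)
}
```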

- Build and run the fetch.d service:

```bash
cd $GOPATH/src/github.com/slotix/dataflowkit/cmd/fetch.d && go build && ./fetch.d
```

- In a new terminal window, build and run the parse.d service:

```bash
cd $GOPATH/src/github.com/slotix/dataflowkit/cmd/parse.d && go build && ./parse.d
```

- Launch parsing. See step 3 in the previous section.

- Start the test services and run the test script:

```bash
docker-compose -f test-docker-compose.yml up -d
./test.sh
```

- To stop the services, run:

```bash
docker-compose -f test-docker-compose.yml down
```

Try the front-end at https://dataflowkit.com/dfk, a point-and-click interface to Dataflow kit services. It generates a JSON config file and sends a POST request to the DFK parser.

Click on the image to see Dataflow kit in action.

This is Free Software, released under the BSD 3-Clause License.

You are welcome to contribute to our project.

- Please submit your issues

- Fork the project