Deep Learning for Multi-Label Text Classification

This repository is my research project, and it is also a study of TensorFlow, Deep Learning(Fasttext, CNN, LSTM, RCNN, etc.).

The main objective of the project is to solve the multi-label text classification problem based on Deep Neural Networks. Thus, the format of the data label is like [0, 1, 0, ..., 1, 1] according to the characteristics of such problem.

Requirements

- Python 3.6

- Tensorflow 1.8 +

- Numpy

- Gensim

Innovation

Data part

- Make the data support Chinese and English.(Which use

jiebaseems easy) - Can use your own pre-trained word vectors.(Which use

gensimseems easy) - Add embedding visualization based on the tensorboard.

Model part

- Add the correct L2 loss calculation operation.

- Add gradients clip operation to prevent gradient explosion.

- Add learning rate decay with exponential decay.

- Add a new Highway Layer (Which is useful according to the model performance).

- Add Batch Normalization Layer.

Code part

- Can choose to train the model directly or restore the model from checkpoint in

train.py. - Can predict the labels via threshold and topK in

train.pyandtest.py. - Can calculate the evaluation metrics --- AUC & AUPRC.

- Add

test.py, the model test code, it can show the predict value of each labels of the data in Testset when creating the final prediction file. - Add other useful data preprocess functions in

data_helpers.py. - Use

loggingfor helping recording the whole info (including parameters display, model training info, etc.). - Provide the ability to save the best n checkpoints in

checkmate.py, whereas thetf.train.Savercan only save the last n checkpoints.

Data

See data format in data folder which including the data sample files.

Text Segment

You can use jieba package if you are going to deal with the chinese text data.

Data Format

This repository can be used in other datasets(text classification) by two ways:

- Modify your datasets into the same format of the sample.

- Modify the data preprocess code in

data_helpers.py.

Anyway, it should depends on what your data and task are.

Pre-trained Word Vectors

You can pre-training your word vectors(based on your corpus) in many ways:

- Use

gensimpackage to pre-train data. - Use

glovetools to pre-train data. - Even can use a fasttext network to pre-train data.

Network Structure

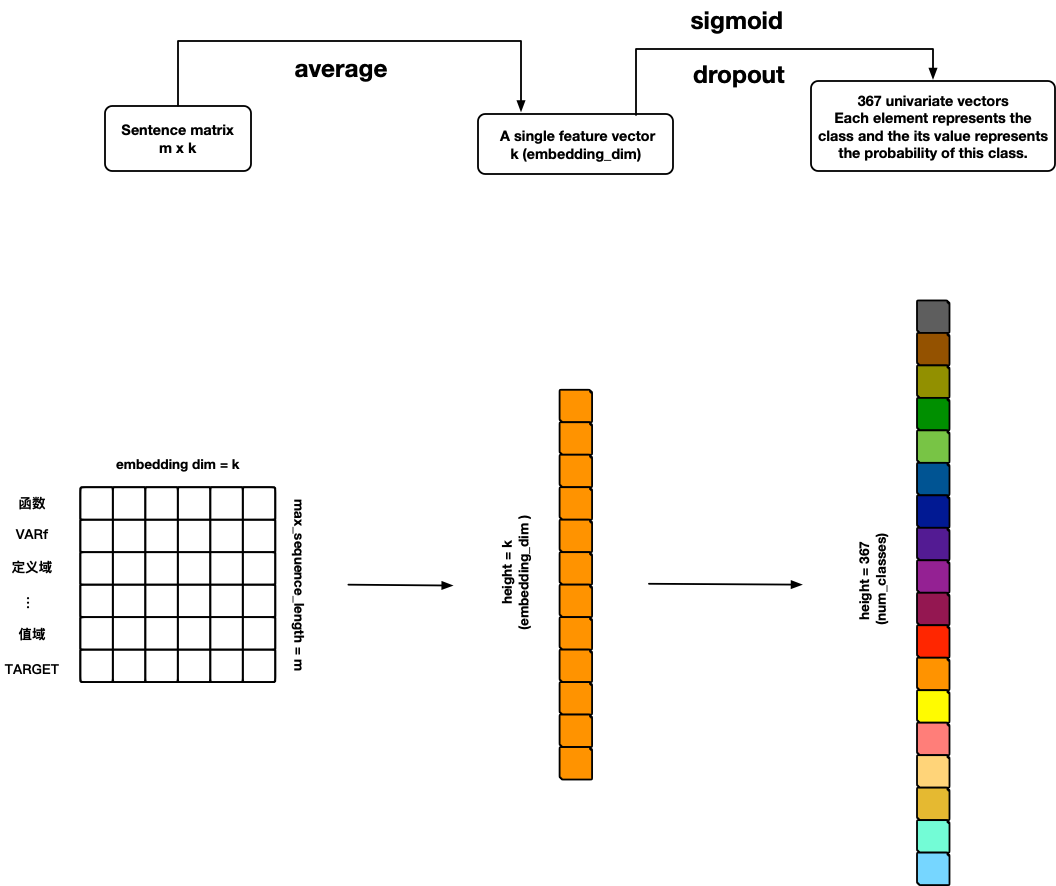

FastText

References:

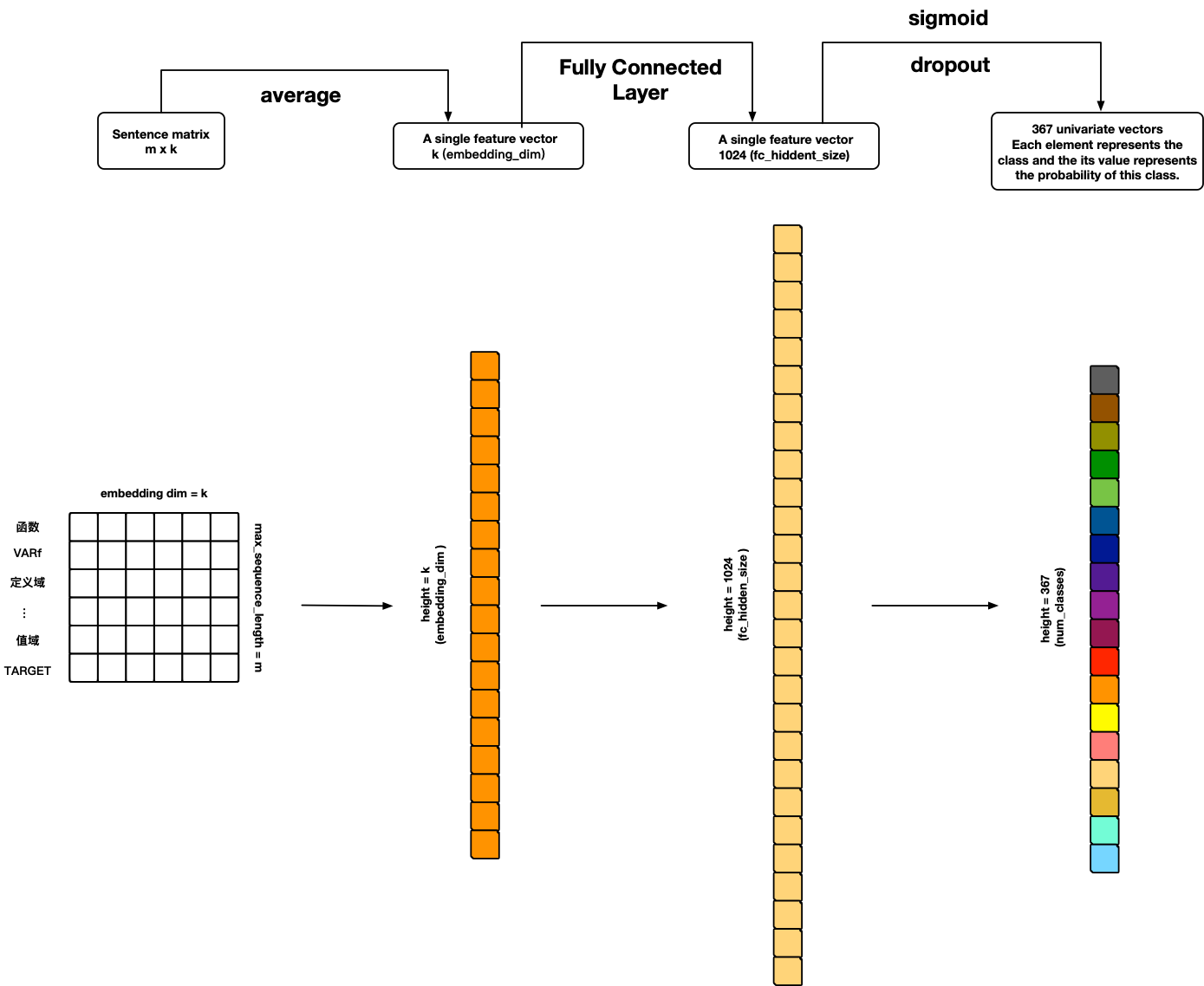

TextANN

References:

- Personal ideas 🙃

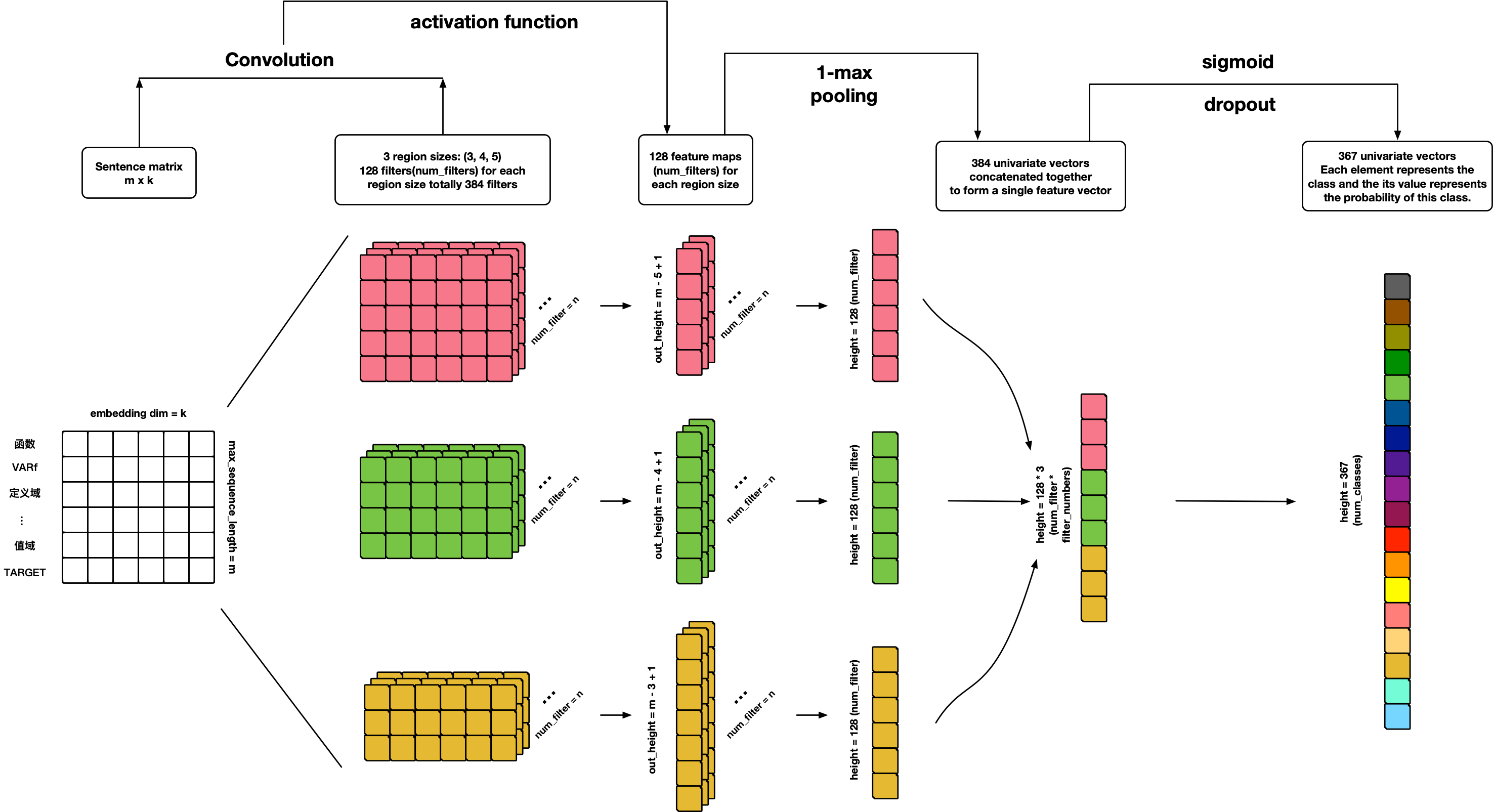

TextCNN

References:

- Convolutional Neural Networks for Sentence Classification

- A Sensitivity Analysis of (and Practitioners' Guide to) Convolutional Neural Networks for Sentence Classification

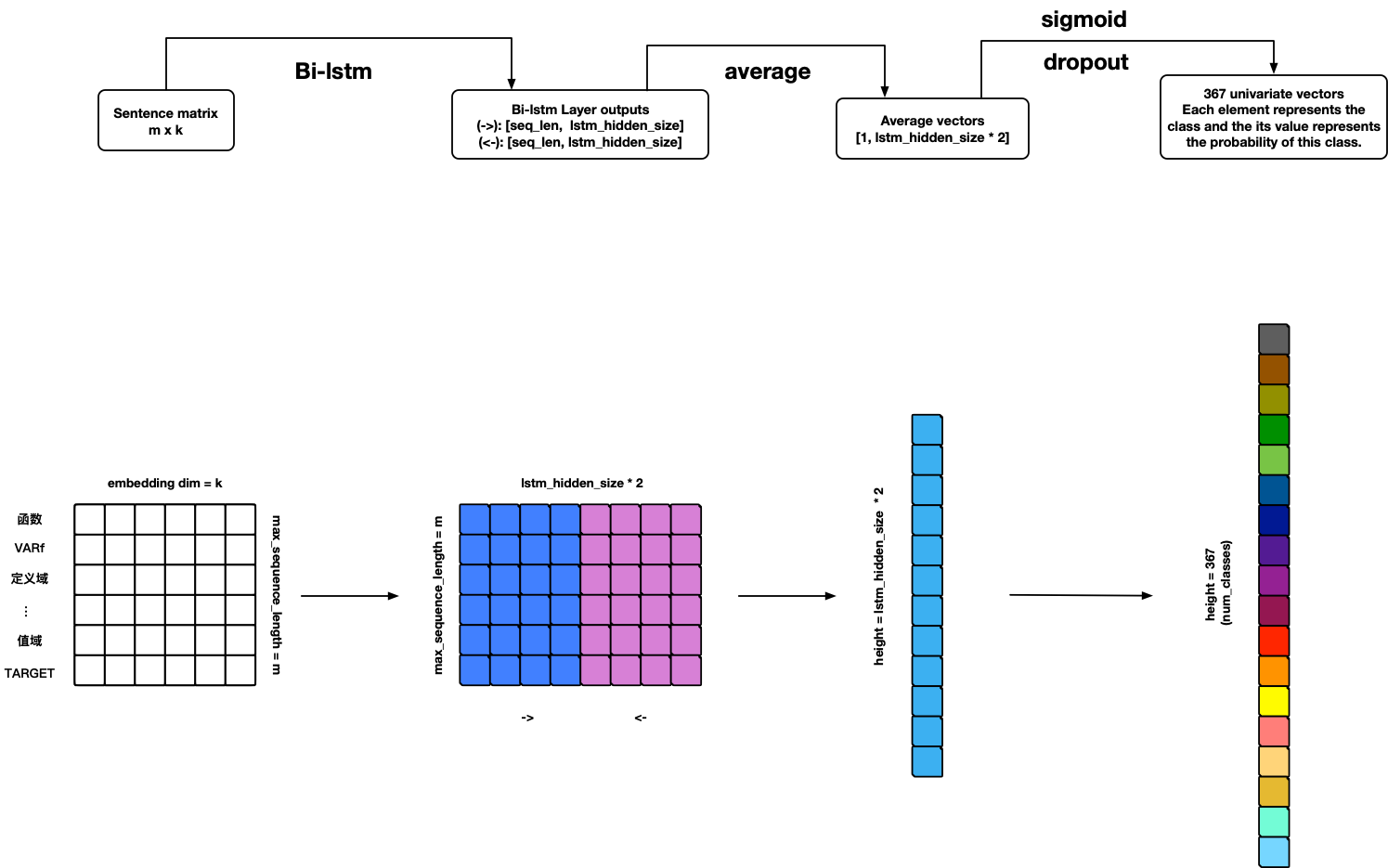

TextRNN

Warning: Model can use but not finished yet 🤪!

TODO

- Add BN-LSTM cell unit.

- Add attention.

References:

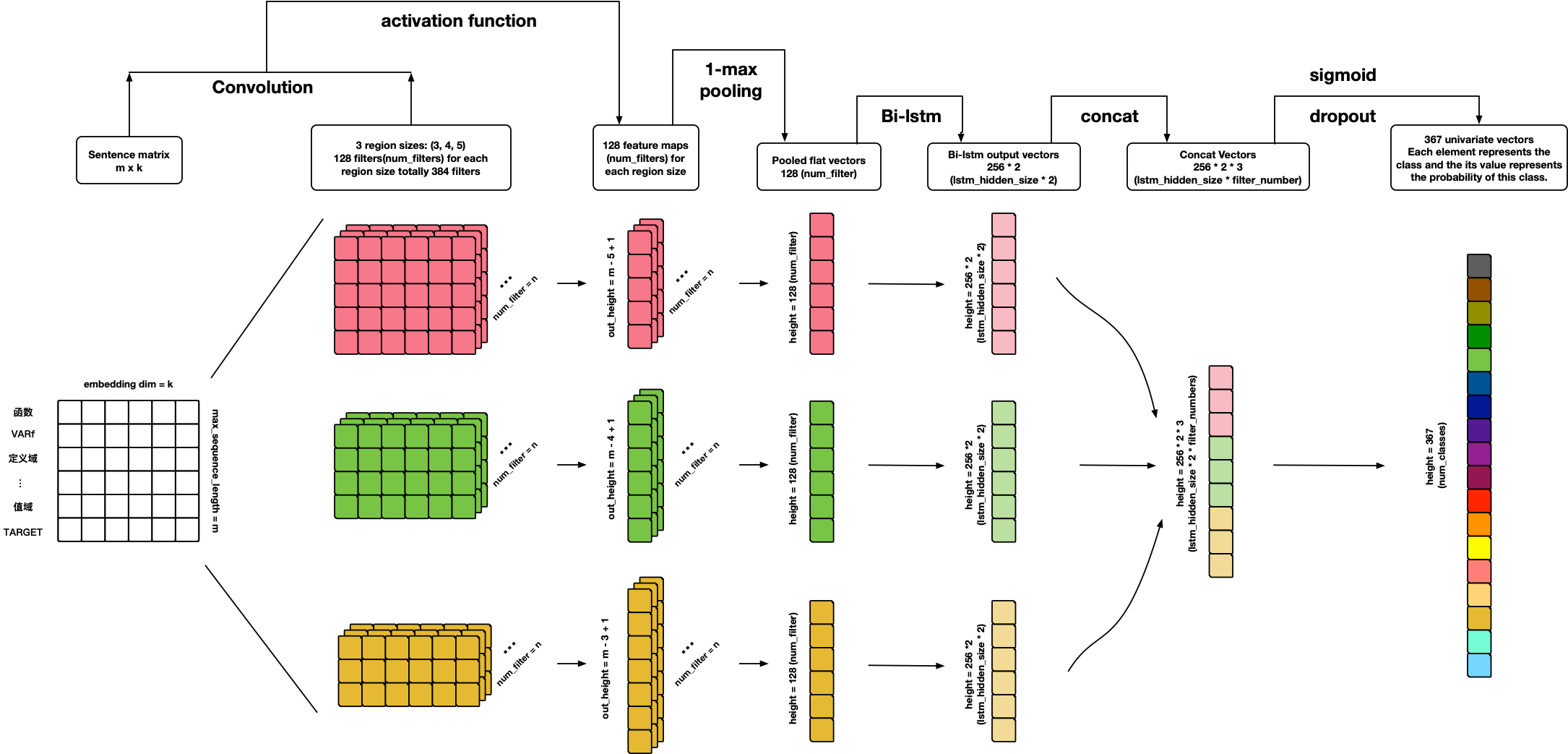

TextCRNN

References:

- Personal ideas 🙃

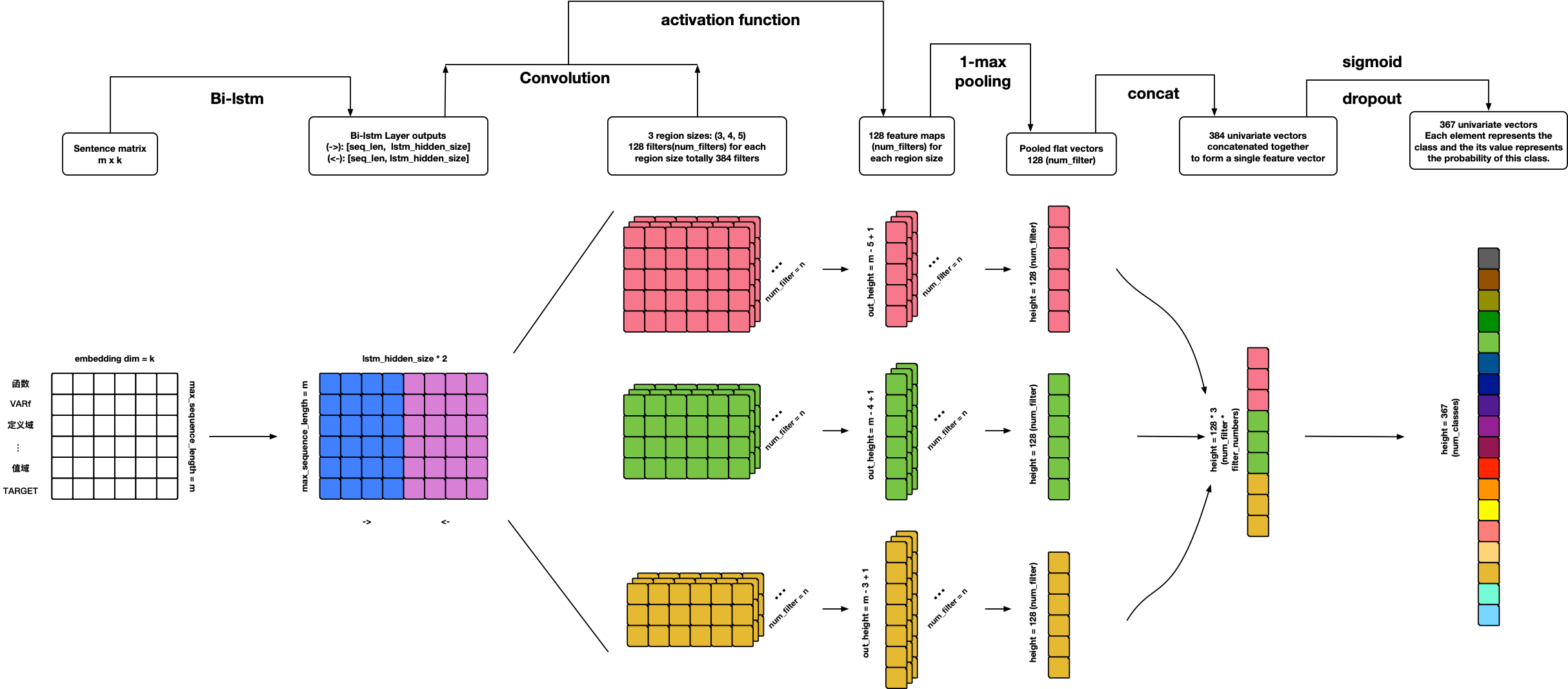

TextRCNN

References:

- Personal ideas 🙃

TextHAN

References:

TextSANN

Warning: Model can use but not finished yet 🤪!

TODO

- Add attention penalization loss.

- Add visualization.

References:

About Me

黄威,Randolph

SCU SE Bachelor; USTC CS Master

Email: [email protected]

My Blog: randolph.pro

LinkedIn: randolph's linkedin