This repository contains the code to reproduce the experiments in Practical Probabilistic Model-based Deep Reinforcement Learning by Integrating Dropout Uncertainty and Trajectory Sampling. The paper has been submitted to IEEE Transactions on Neural Networks and Learning Systems (TNNLS) and is currently under peer review.

Please feel free to contact us about the details of implementing DPETS. (Wenjun Huang: [email protected]; Yunduan Cui: [email protected])

- Install MuJoCo 1.31 and copy your license key to `~/.mujoco/mjkey.txt`.
- Install PyTorch. We recommend CUDA 11.6 and PyTorch 1.13.1.
- Install the other dependencies with `pip install -r requirements.txt`.
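As a quick sanity check of the steps above, the following minimal sketch reports whether the MuJoCo license key is in place and whether PyTorch can be imported. It is not part of the repository; the function name `check_environment` is hypothetical, and the script only inspects the path and package named above.

```python
import importlib.util
from pathlib import Path


def check_environment(key_path=Path.home() / ".mujoco" / "mjkey.txt"):
    """Return a dict of simple checks for the setup steps (hypothetical helper)."""
    report = {
        # The license key is expected at ~/.mujoco/mjkey.txt by default.
        "mujoco_key_present": Path(key_path).is_file(),
        # find_spec returns None when the package cannot be located.
        "torch_installed": importlib.util.find_spec("torch") is not None,
    }
    if report["torch_installed"]:
        import torch

        report["torch_version"] = torch.__version__
        report["cuda_available"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    for name, value in check_environment().items():
        print(f"{name}: {value}")
```

If `torch_version` does not start with 1.13.1, the code may still run, but that is the version recommended above.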

An experiment for a specific configuration can be run with:

`python main.py --config cartpole`

The configuration file for each experiment is located in the `configs` directory, and the default configuration file, `default_config.json`, is in the root directory. You can edit it to modify the experimental parameters.
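To illustrate how a default file and a per-experiment file can be combined, here is a minimal sketch. It rests on assumptions not confirmed by this README: that the per-experiment file is JSON named `configs/<name>.json`, and that its values override those in `default_config.json`. The helper names `deep_update` and `load_config` are hypothetical, and `main.py` may handle configuration differently.

```python
import json
from pathlib import Path


def deep_update(base, override):
    """Return a copy of `base` with values from `override` applied recursively."""
    merged = dict(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Merge nested sections instead of replacing them wholesale.
            merged[key] = deep_update(merged[key], value)
        else:
            merged[key] = value
    return merged


def load_config(name, root="."):
    """Load default_config.json, then overlay configs/<name>.json (assumed layout)."""
    root = Path(root)
    default = json.loads((root / "default_config.json").read_text())
    specific = json.loads((root / "configs" / f"{name}.json").read_text())
    return deep_update(default, specific)
```

Under these assumptions, `load_config("cartpole")` would return the default parameters with any cartpole-specific values taking precedence.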

We use TensorBoard to record experimental data. You can view the runs with:

tensorboard --logdir ./runs/ --port=6006 --host=0.0.0.0@misc{huang2023practical,

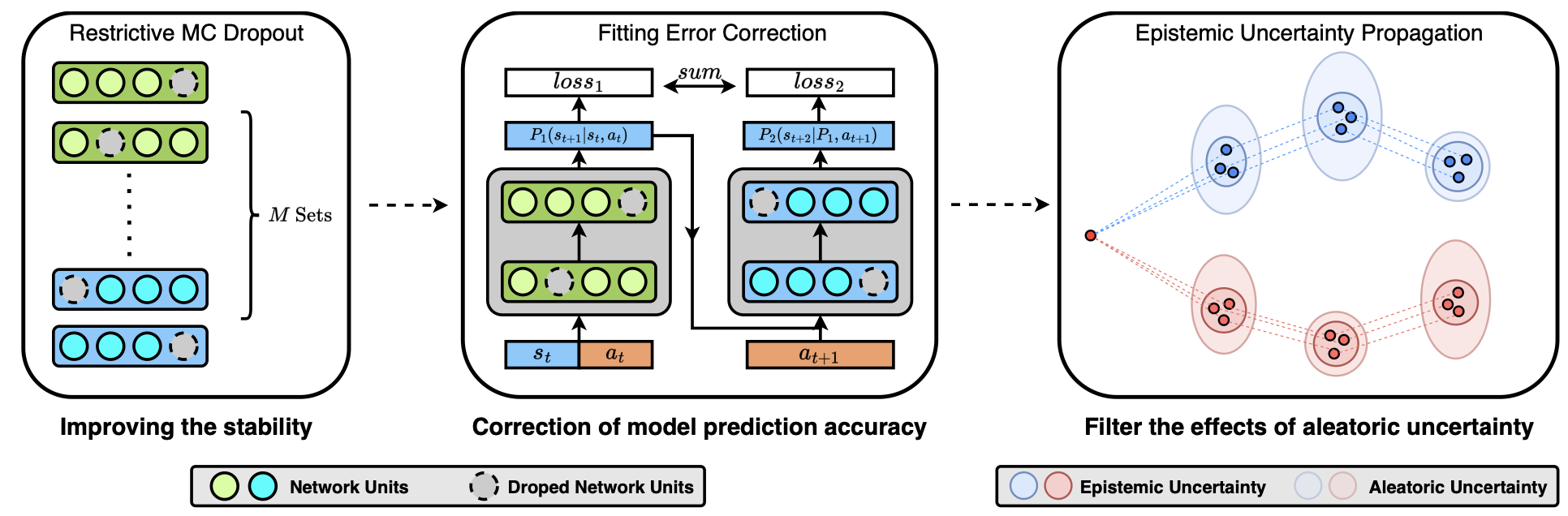

title={Practical Probabilistic Model-based Deep Reinforcement Learning by Integrating Dropout Uncertainty and Trajectory Sampling},

author={Wenjun Huang and Yunduan Cui and Huiyun Li and Xinyu Wu},

year={2023},

eprint={2309.11089},

archivePrefix={arXiv},

primaryClass={eess.SY}

}