This repository provides the code for reproducing the experiments in our paper. SPBERT is a BERT-based language model pre-trained on massive SPARQL query logs. It learns general-purpose representations of both natural language and the SPARQL query language, and it exploits the sequential order of words, which is crucial for a structured language like SPARQL.

To reproduce our experiments, install the following dependencies, which are listed in requirements.txt:

- transformers==4.5.1

- pytorch==1.8.1

- python 3.7.10

$ pip install -r requirements.txt

We release three versions of pre-trained weights. Pre-training was based on the original BERT code provided by Google, and training details are described in our paper. You can download all versions from the table below:

| Pre-training objective | Model | Steps | Link |
|---|---|---|---|
| MLM | SPBERT (scratch) | 200k | 🤗 razent/spbert-mlm-zero |
| MLM | SPBERT (BERT-initialized) | 200k | 🤗 razent/spbert-mlm-base |
| MLM+WSO | SPBERT (BERT-initialized) | 200k | 🤗 razent/spbert-mlm-wso-base |
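
As a rough illustration of how the checkpoints in the table above might be loaded, here is a minimal Python sketch. The model IDs are copied from the table; the helper names `checkpoint_id` and `load_spbert` and the short keys `"scratch"` / `"bert"` are hypothetical and exist only for this example. Loading goes through the generic `AutoTokenizer` / `AutoModel` classes of `transformers`, which is an assumption about how the weights are meant to be consumed and not a description of what `run.py` does.

```python
from typing import Dict, Tuple

# Hugging Face model IDs copied from the table above, keyed by
# (pre-training objective, initialization). The keys are illustrative.
SPBERT_CHECKPOINTS: Dict[Tuple[str, str], str] = {
    ("MLM", "scratch"): "razent/spbert-mlm-zero",
    ("MLM", "bert"): "razent/spbert-mlm-base",
    ("MLM+WSO", "bert"): "razent/spbert-mlm-wso-base",
}


def checkpoint_id(objective: str, init: str) -> str:
    """Return the model ID for an objective/initialization pair."""
    key = (objective, init)
    if key not in SPBERT_CHECKPOINTS:
        raise ValueError(f"no released checkpoint for {objective!r} / {init!r}")
    return SPBERT_CHECKPOINTS[key]


def load_spbert(model_id: str):
    """Fetch (or read from the local cache) the tokenizer and encoder weights."""
    # Imported lazily so the lookup helper above works without transformers.
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModel.from_pretrained(model_id)
    return tokenizer, model


# Example usage (needs transformers, torch, and network access):
#   tokenizer, model = load_spbert(checkpoint_id("MLM", "scratch"))
#   inputs = tokenizer("select ?x where { ?x a ?y }", return_tensors="pt")
#   hidden = model(**inputs).last_hidden_state  # one vector per input token
```

The query string in the usage comment is only a placeholder; the SPARQL input may need to be preprocessed into the form used during pre-training, as described in the paper.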

All evaluation datasets can be downloaded here.
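
The commands below pass a path prefix such as `./LCQUAD/train` together with `--source en` and `--target sparql`. One plausible reading, and it is only an assumption, is that each split is stored as two aligned text files, `<prefix>.en` and `<prefix>.sparql`, with one example per line. Under that assumption, a minimal sketch for inspecting a split could look like the following; `read_parallel` is a hypothetical helper, not a function from this repository, so check `run.py` for the actual loader.

```python
from pathlib import Path
from typing import List, Tuple


def read_parallel(prefix: str, source: str = "en",
                  target: str = "sparql") -> List[Tuple[str, str]]:
    """Read aligned (source, target) pairs from <prefix>.<source> and <prefix>.<target>.

    Assumption: one example per line, and line i of both files belongs together.
    """
    src_lines = Path(f"{prefix}.{source}").read_text(encoding="utf-8").splitlines()
    tgt_lines = Path(f"{prefix}.{target}").read_text(encoding="utf-8").splitlines()
    if len(src_lines) != len(tgt_lines):
        raise ValueError(
            f"misaligned files: {len(src_lines)} source lines "
            f"vs {len(tgt_lines)} target lines"
        )
    # Strip surrounding whitespace but keep the pairing by line index.
    return [(s.strip(), t.strip()) for s, t in zip(src_lines, tgt_lines)]
```

Calling `read_parallel("./LCQUAD/train")` would then return a list of question/query pairs, which is a quick way to confirm that both files are aligned before starting a long fine-tuning run.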

To fine-tune models:

python run.py \

--do_train \

--do_eval \

--model_type bert \

--model_architecture bert2bert \

--encoder_model_name_or_path bert-base-cased \

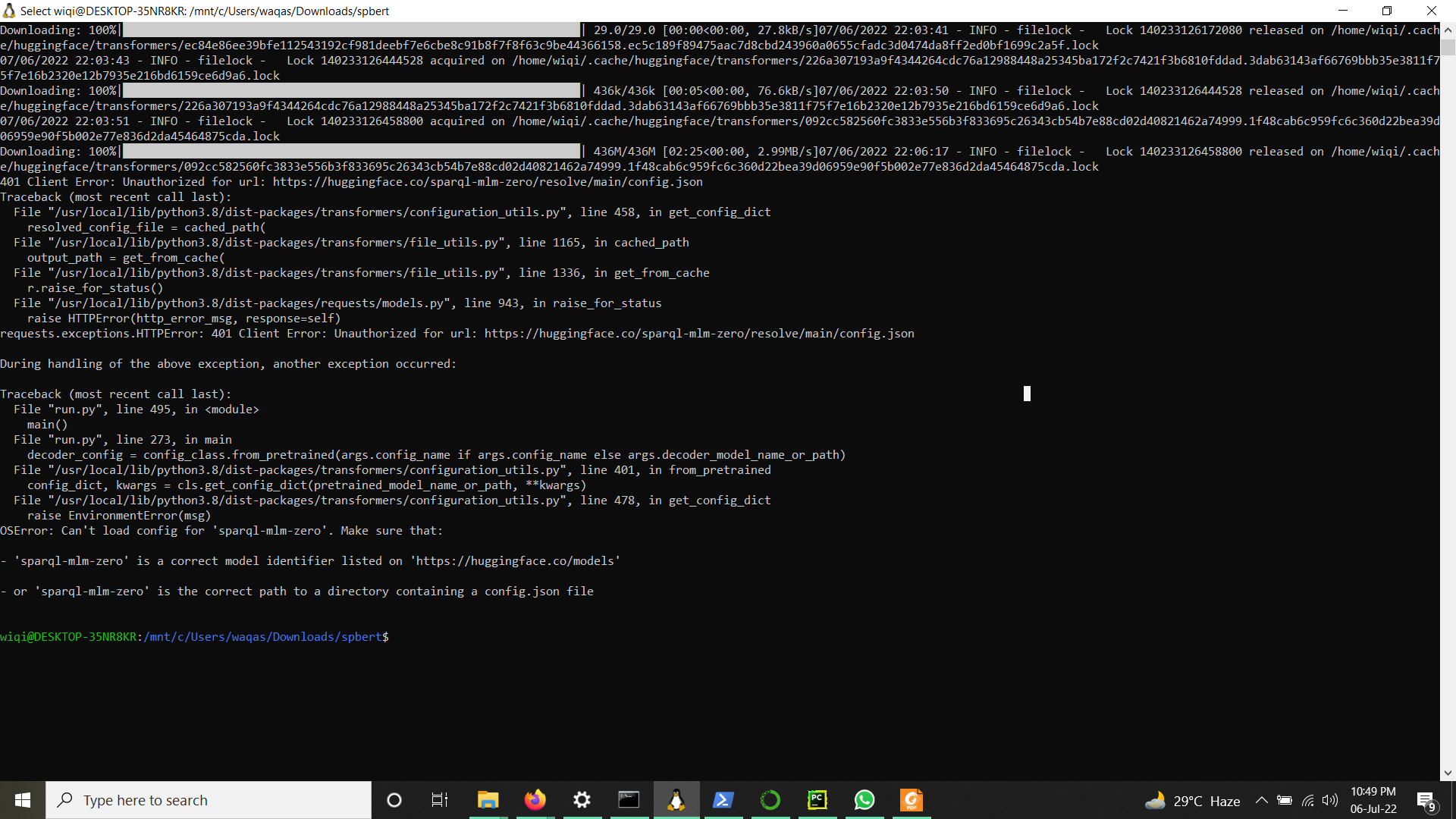

--decoder_model_name_or_path sparql-mlm-zero \

--source en \

--target sparql \

--train_filename ./LCQUAD/train \

--dev_filename ./LCQUAD/dev \

--output_dir ./ \

--max_source_length 64 \

--weight_decay 0.01 \

--max_target_length 128 \

--beam_size 10 \

--train_batch_size 32 \

--eval_batch_size 32 \

--learning_rate 5e-5 \

--save_inverval 10 \

--num_train_epochs 150To evaluate models:

    python run.py \
        --do_test \
        --model_type bert \
        --model_architecture bert2bert \
        --encoder_model_name_or_path bert-base-cased \
        --decoder_model_name_or_path sparql-mlm-zero \
        --source en \
        --target sparql \
        --load_model_path ./checkpoint-best-bleu/pytorch_model.bin \
        --dev_filename ./LCQUAD/dev \
        --test_filename ./LCQUAD/test \
        --output_dir ./ \
        --max_source_length 64 \
        --max_target_length 128 \
        --beam_size 10 \
        --eval_batch_size 32

Email: [email protected] - Hieu Tran

    @inproceedings{Tran2021SPBERTAE,
      title={SPBERT: An Efficient Pre-training BERT on SPARQL Queries for Question Answering over Knowledge Graphs},
      author={Hieu Tran and Long Phan and James T. Anibal and Binh Thanh Nguyen and Truong-Son Nguyen},
      booktitle={ICONIP},
      year={2021}
    }