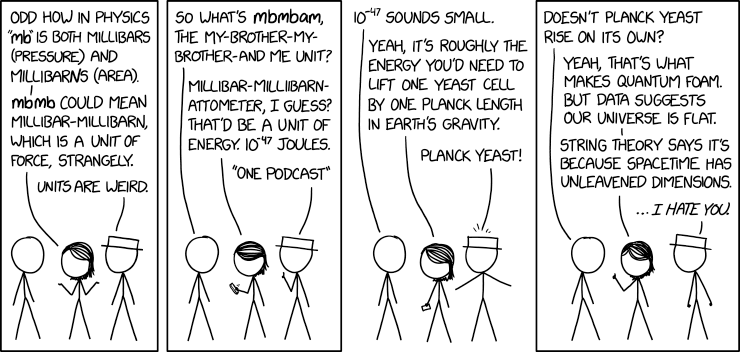

© Randall Munroe, 2020

This tool interprets phrases as if they were a sequence of units, slicing the phrase into unit symbols that cancel out into the smallest unit that covers as much of the phrase as possible. It thereby implements XKCD 2312. The engine inserts SI prefixes where they make the resulting unit smaller. It uses unit symbols, common names, and conversions to SI provided by Wikipedia, specifically this Lua table that is generated from that page.

Live implementation at https://units.lam.io

The engine is written in Haskell and is made up of two parts:

- A dynamic-programming algorithm that finds the unit that bridges the current string position to a later one with the smallest resulting SI unit, and

- A heuristic knapsack-problem solver to convert the final SI unit back to a more familiar worded form (e.g. m/s → speed).

Since the DP and knapsack solvers aren't optimal, the results aren't always strictly minimal, but they're usually pretty good and small.
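To give a feel for the first part, here is a minimal Python sketch of the slicing idea. It is not the Haskell engine's code. The toy `UNITS` table, the "size" measure (sum of absolute SI exponents), and the rule that an unmatched character is simply skipped are all assumptions made for illustration, and units are only ever multiplied together. Like the real solver, it keeps just one candidate per string position, so it is not guaranteed to be minimal.

```python
from typing import Dict, List, Tuple

Dim = Tuple[int, int, int]  # exponents of (metre, kilogram, second)

# Hypothetical toy table; the real engine reads its units from hs-data/u2si.json.
UNITS: Dict[str, Dim] = {
    "m": (1, 0, 0),
    "s": (0, 0, 1),
    "Hz": (0, 0, -1),
    "N": (1, 1, -2),
    "J": (2, 1, -2),
}

ZERO: Dim = (0, 0, 0)


def add(a: Dim, b: Dim) -> Dim:
    """Multiply two units by adding their exponents."""
    return tuple(x + y for x, y in zip(a, b))


def size(d: Dim) -> int:
    """Assumed measure of how 'big' a unit is: sum of absolute exponents."""
    return sum(abs(x) for x in d)


def segment(phrase: str) -> Tuple[int, Dim, List[str]]:
    """Return (skipped characters, resulting dimension, symbols used)."""
    n = len(phrase)
    # best[i] holds the single best parse found so far for phrase[:i].
    best = [None] * (n + 1)
    best[0] = (0, ZERO, [])
    for i in range(n):
        skipped, dim, syms = best[i]
        # Option 1: give up on this character and move on.
        candidates = [(i + 1, (skipped + 1, dim, syms))]
        # Option 2: bridge to a later position with any unit symbol that fits here.
        for sym, udim in UNITS.items():
            if phrase.startswith(sym, i):
                candidates.append((i + len(sym), (skipped, add(dim, udim), syms + [sym])))
        for j, cand in candidates:
            # Prefer covering more of the phrase, then the smaller unit.
            if best[j] is None or (cand[0], size(cand[1])) < (best[j][0], size(best[j][1])):
                best[j] = cand
    return best[n]


print(segment("Hzs"))  # (0, (0, 0, 0), ['Hz', 's']): Hz and s cancel out
print(segment("Nm"))   # (0, (2, 1, -2), ['N', 'm'])
```

Keeping only one candidate per position is what makes this fast and also what makes it approximate: a parse that looks worse at position `i` might have cancelled better later on.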

Further details on implementation can be found on my blog.
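The second part (turning an SI unit back into words) can be sketched in the same spirit. The snippet below is a plain greedy heuristic, not the engine's actual solver: the `UTYPES` table and the `name_unit` helper are hypothetical, and the real list of utypes lives in `hs-data/lim_utypes`. At each step it picks the named quantity (or its inverse) that shrinks the leftover exponents the most.

```python
from typing import Dict, Tuple

Dim = Tuple[int, int, int]  # exponents of (metre, kilogram, second)

# Hypothetical toy table of named quantities and their SI dimensions.
UTYPES: Dict[str, Dim] = {
    "length": (1, 0, 0),
    "time": (0, 0, 1),
    "mass": (0, 1, 0),
    "speed": (1, 0, -1),
    "force": (1, 1, -2),
    "energy": (2, 1, -2),
}


def size(d: Dim) -> int:
    """Sum of absolute exponents still left to explain."""
    return sum(abs(x) for x in d)


def name_unit(dim: Dim) -> Tuple[Dict[str, int], Dim]:
    """Greedily express `dim` as named quantities raised to integer powers.

    Returns (powers by name, leftover dimension that could not be named).
    """
    residual = dim
    picked: Dict[str, int] = {}
    while size(residual) > 0:
        best = None
        for name, udim in UTYPES.items():
            for sign in (1, -1):  # try the quantity and its inverse
                cand = tuple(r - sign * u for r, u in zip(residual, udim))
                if best is None or size(cand) < best[0]:
                    best = (size(cand), name, sign, cand)
        if best[0] >= size(residual):
            break  # nothing helps any more; leave the rest unnamed
        _, name, sign, residual = best
        picked[name] = picked.get(name, 0) + sign
    return picked, residual


print(name_unit((1, 0, -1)))  # ({'speed': 1}, (0, 0, 0)), i.e. m/s -> speed
print(name_unit((1, 1, -3)))  # ({'force': 1, 'time': -1}, (0, 0, 0))
```

Because every accepted step strictly reduces the leftover size, the loop always terminates, but a greedy pick can miss a shorter wording that an exact search would find.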

This is a Python-Haskell-React project. Yes, it's time to get funky with package managers.

- Install and build using:

$ npm i

$ pip install -r requirements.txt

$ cabal build

- This repo contains the data I used at the time of creation, so it's possible to run the server directly with `cabal run`, where it starts listening on port 8000 by Happstack's default.

- However, if you want to update the data from Wikipedia and/or tweak the conversion:

  - `prep/dat.lua` is the extracted set of Lua unit tables at Wikipedia's Module:Convert/data, with `all_units` exposed. `prep/convert.lua` just uses a tweaked version of RXI's json.lua to ignore the weird mixed tables and yank the data into JSON like `cd prep; lua convert.lua > wikiunits.json`.

  - This data contains aliases of unit symbols that need to be flattened (e.g. `U.S.gal` -> `USgal`) and ratios whose constituent units need to be resolved (e.g. `L/100 km`). Further, some symbols are referenced but not specified because they are prefixed. These include: `cm2, km2, um, mm, km, cm3, km3, ml, dL, ug, mg, kg, Mg, kPa, kN, kJ, MJ`. I added these by hand to `wikiunits.json`. `prep/convert.py` converts the data to one the Haskell engine can use via `cd prep; python convert.py wikiunits.json utypes.json > ../hs-data/u2si.json`.

  - There is a minified list of utypes called `hs-data/lim_utypes` for use by the knapsack solver that removes redundant options (like `volume per area` vs. `length`) and unitless utypes (like `gradient`) to help it solve faster.

- The Wikipedia data is also not the most complete. Notably it's missing candela, and to my eye it's also sparse on electrical units (like Volt, Farad, Henry, etc.). I'm looking for better datasets (see #1) that should improve the quality of the solver's results.
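For anyone tweaking the conversion, the alias-flattening step mentioned above (e.g. `U.S.gal` -> `USgal`) boils down to following pointers until a real definition turns up. The sketch below is not the contents of `prep/convert.py`: the `target`, `si`, and `scale` field names and the `flatten_aliases` helper are assumptions for illustration, and resolving ratios like `L/100 km` is not covered.

```python
from typing import Any, Dict


def flatten_aliases(units: Dict[str, Dict[str, Any]]) -> Dict[str, Dict[str, Any]]:
    """Replace every alias entry with a copy of the definition it points to.

    An alias is assumed to be an entry carrying a "target" key (hypothetical name).
    """
    flat: Dict[str, Dict[str, Any]] = {}
    for sym in units:
        seen = set()
        cur = sym
        # Follow the alias chain until we hit an entry with a real definition.
        while "target" in units[cur]:
            if cur in seen:
                raise ValueError(f"alias cycle starting at {sym!r}")
            seen.add(cur)
            cur = units[cur]["target"]
        flat[sym] = dict(units[cur])
    return flat


# Illustrative input: one real definition plus a chain of two aliases.
raw = {
    "USgal": {"si": "m3", "scale": 0.003785411784},
    "U.S.gal": {"target": "USgal"},
    "usgal": {"target": "U.S.gal"},
}

flat = flatten_aliases(raw)
print(flat["U.S.gal"])  # same definition as raw["USgal"]
```

Copying the resolved entry (rather than sharing it) keeps later hand edits to one symbol from leaking into its aliases.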