Comments (7)

@psychemedia

I have implemented Jupyter Notebook support in sqlitebiter 0.16.0.

For example, https://github.com/aymericdamien/TensorFlow-Examples/blob/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb will be converted into the following:

$ sqlitebiter url "https://raw.githubusercontent.com/aymericdamien/TensorFlow-Examples/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb"

[INFO] sqlitebiter url: convert 'raw.githubusercontent.com/aymericdamien/TensorFlow-Examples/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb' to 'cells_source' table

[INFO] sqlitebiter url: convert 'raw.githubusercontent.com/aymericdamien/TensorFlow-Examples/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb' to 'cells_outputs' table

[INFO] sqlitebiter url: convert 'raw.githubusercontent.com/aymericdamien/TensorFlow-Examples/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb' to 'cells_outputs_kv' table

[INFO] sqlitebiter url: convert 'raw.githubusercontent.com/aymericdamien/TensorFlow-Examples/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb' to 'cells_kv' table

[INFO] sqlitebiter url: convert 'raw.githubusercontent.com/aymericdamien/TensorFlow-Examples/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb' to 'metadata_kernelspec' table

[INFO] sqlitebiter url: convert 'raw.githubusercontent.com/aymericdamien/TensorFlow-Examples/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb' to 'metadata_language_info' table

[INFO] sqlitebiter url: convert 'raw.githubusercontent.com/aymericdamien/TensorFlow-Examples/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb' to 'metadata_kv' table

[INFO] sqlitebiter url: convert 'raw.githubusercontent.com/aymericdamien/TensorFlow-Examples/master/notebooks/3_NeuralNetworks/recurrent_network.ipynb' to 'kv' table

[INFO] sqlitebiter url: number of created tables: 8

[INFO] sqlitebiter url: database path: out.sqlite

schema

sqlite> .schema

CREATE TABLE IF NOT EXISTS 'cells_source' (cell_id INTEGER NOT NULL, line_no INTEGER NOT NULL, text TEXT);

CREATE TABLE IF NOT EXISTS 'cells_outputs' (cell_id INTEGER NOT NULL, type TEXT NOT NULL, line_no INTEGER, data BLOB);

CREATE TABLE IF NOT EXISTS 'cells_outputs_kv' (cell_id INTEGER NOT NULL, key TEXT NOT NULL, value TEXT);

CREATE TABLE IF NOT EXISTS 'cells_kv' (cell_id INTEGER NOT NULL, key TEXT NOT NULL, value TEXT);

CREATE TABLE IF NOT EXISTS 'metadata_kernelspec' (key TEXT NOT NULL, value TEXT NOT NULL);

CREATE TABLE IF NOT EXISTS 'metadata_language_info' (key TEXT NOT NULL, value TEXT NOT NULL);

CREATE TABLE IF NOT EXISTS 'metadata_kv' (key TEXT NOT NULL, value TEXT NOT NULL);

CREATE TABLE IF NOT EXISTS 'kv' (key TEXT NOT NULL, value TEXT);
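To give a rough idea of how these tables relate to each other, here is a minimal sketch that recreates two of them in an in-memory database with Python's standard `sqlite3` module and joins `cells_source` with `cells_kv` to keep only the code cells. Only the table and column names come from the schema above; the sample rows and the helper name `code_cell_lines` are made up for illustration.

```python
import sqlite3

# Two of the tables from the schema above, recreated in memory.
SCHEMA = """
CREATE TABLE IF NOT EXISTS cells_source (cell_id INTEGER NOT NULL, line_no INTEGER NOT NULL, text TEXT);
CREATE TABLE IF NOT EXISTS cells_kv (cell_id INTEGER NOT NULL, key TEXT NOT NULL, value TEXT);
"""


def code_cell_lines(conn):
    """Return (cell_id, line_no, text) rows for cells whose cell_type is 'code'."""
    query = """
        SELECT s.cell_id, s.line_no, s.text
        FROM cells_source AS s
        JOIN cells_kv AS kv ON kv.cell_id = s.cell_id
        WHERE kv.key = 'cell_type' AND kv.value = 'code'
        ORDER BY s.cell_id, s.line_no
    """
    return conn.execute(query).fetchall()


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)

# Hypothetical sample rows, loosely modelled on the tables in this comment.
conn.executemany("INSERT INTO cells_source VALUES (?, ?, ?)", [
    (0, 0, "# Recurrent Neural Network Example"),
    (2, 0, "import tensorflow as tf"),
    (2, 1, "from tensorflow.contrib import rnn"),
])
conn.executemany("INSERT INTO cells_kv VALUES (?, ?, ?)", [
    (0, "cell_type", "markdown"),
    (2, "cell_type", "code"),
    (2, "execution_count", "1"),
])

rows = code_cell_lines(conn)
for row in rows:
    print(row)
```

Against a real `out.sqlite` file, the same helper should work by passing `sqlite3.connect("out.sqlite")` instead of the in-memory connection.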

tables

cells_source

| cell_id | line_no | text |

|---|---|---|

| 0 | 0 | # Recurrent Neural Network Example |

| 0 | 1 | |

| 0 | 2 | Build a recurrent neural network (LSTM) with TensorFlow. |

| 0 | 3 | |

| 0 | 4 | - Author: Aymeric Damien |

| 0 | 5 | - Project: https://github.com/aymericdamien/TensorFlow-Examples/ |

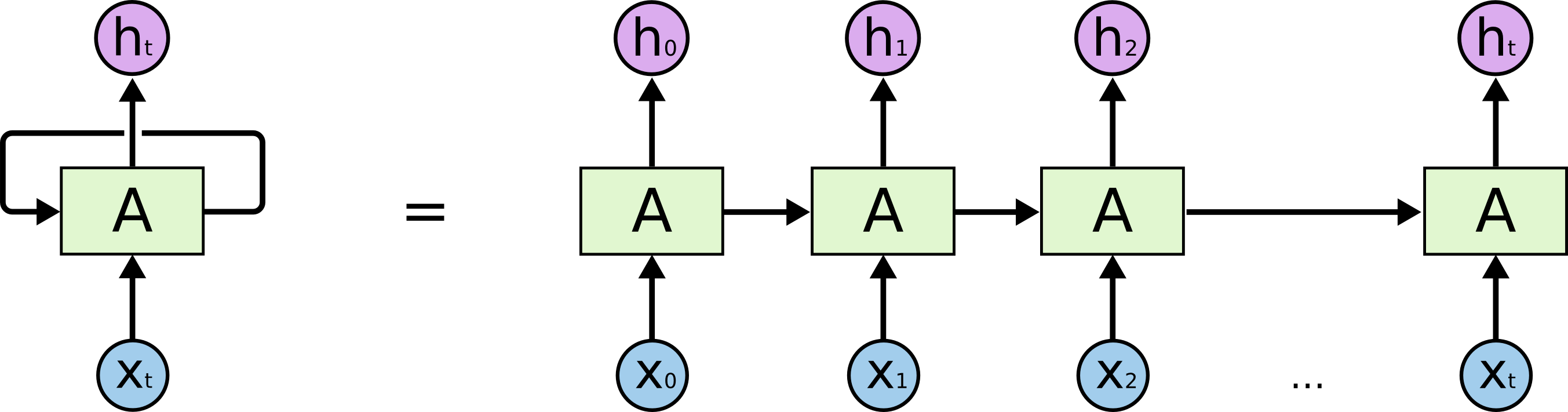

| 1 | 0 | ## RNN Overview |

| 1 | 1 | |

| 1 | 2 |  |

| 1 | 3 | |

| 1 | 4 | References: |

| 1 | 5 | - Long Short Term Memory, Sepp Hochreiter & Jurgen Schmidhuber, Neural Computation 9(8): 1735-1780, 1997. |

| 1 | 6 | |

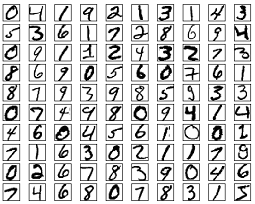

| 1 | 7 | ## MNIST Dataset Overview |

| 1 | 8 | |

| 1 | 9 | This example is using MNIST handwritten digits. The dataset contains 60,000 examples for training and 10,000 examples for testing. The digits have been size-normalized and centered in a fixed-size image (28x28 pixels) with values from 0 to 1. For simplicity, each image has been flattened and converted to a 1-D numpy array of 784 features (28*28). |

| 1 | 10 | |

| 1 | 11 |  |

| 1 | 12 | |

| 1 | 13 | To classify images using a recurrent neural network, we consider every image row as a sequence of pixels. Because MNIST image shape is 28*28px, we will then handle 28 sequences of 28 timesteps for every sample. |

| 1 | 14 | |

| 1 | 15 | More info: http://yann.lecun.com/exdb/mnist/ |

| 2 | 0 | from __future__ import print_function |

| 2 | 1 | |

| 2 | 2 | import tensorflow as tf |

| 2 | 3 | from tensorflow.contrib import rnn |

| 2 | 4 | |

| 2 | 5 | # Import MNIST data |

| 2 | 6 | from tensorflow.examples.tutorials.mnist import input_data |

| 2 | 7 | mnist = input_data.read_data_sets("/tmp/data/", one_hot=True) |

| 3 | 0 | # Training Parameters |

| 3 | 1 | learning_rate = 0.001 |

| 3 | 2 | training_steps = 10000 |

| 3 | 3 | batch_size = 128 |

| 3 | 4 | display_step = 200 |

| 3 | 5 | |

| 3 | 6 | # Network Parameters |

| 3 | 7 | num_input = 28 # MNIST data input (img shape: 28*28) |

| 3 | 8 | timesteps = 28 # timesteps |

| 3 | 9 | num_hidden = 128 # hidden layer num of features |

| 3 | 10 | num_classes = 10 # MNIST total classes (0-9 digits) |

| 3 | 11 | |

| 3 | 12 | # tf Graph input |

| 3 | 13 | X = tf.placeholder("float", [None, timesteps, num_input]) |

| 3 | 14 | Y = tf.placeholder("float", [None, num_classes]) |

| 4 | 0 | # Define weights |

| 4 | 1 | weights = { |

| 4 | 2 | 'out': tf.Variable(tf.random_normal([num_hidden, num_classes])) |

| 4 | 3 | } |

| 4 | 4 | biases = { |

| 4 | 5 | 'out': tf.Variable(tf.random_normal([num_classes])) |

| 4 | 6 | } |

| 5 | 0 | def RNN(x, weights, biases): |

| 5 | 1 | |

| 5 | 2 | # Prepare data shape to match rnn function requirements |

| 5 | 3 | # Current data input shape: (batch_size, timesteps, n_input) |

| 5 | 4 | # Required shape: 'timesteps' tensors list of shape (batch_size, n_input) |

| 5 | 5 | |

| 5 | 6 | # Unstack to get a list of 'timesteps' tensors of shape (batch_size, n_input) |

| 5 | 7 | x = tf.unstack(x, timesteps, 1) |

| 5 | 8 | |

| 5 | 9 | # Define a lstm cell with tensorflow |

| 5 | 10 | lstm_cell = rnn.BasicLSTMCell(num_hidden, forget_bias=1.0) |

| 5 | 11 | |

| 5 | 12 | # Get lstm cell output |

| 5 | 13 | outputs, states = rnn.static_rnn(lstm_cell, x, dtype=tf.float32) |

| 5 | 14 | |

| 5 | 15 | # Linear activation, using rnn inner loop last output |

| 5 | 16 | return tf.matmul(outputs[-1], weights['out']) + biases['out'] |

| 6 | 0 | logits = RNN(X, weights, biases) |

| 6 | 1 | prediction = tf.nn.softmax(logits) |

| 6 | 2 | |

| 6 | 3 | # Define loss and optimizer |

| 6 | 4 | loss_op = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits( |

| 6 | 5 | logits=logits, labels=Y)) |

| 6 | 6 | optimizer = tf.train.GradientDescentOptimizer(learning_rate=learning_rate) |

| 6 | 7 | train_op = optimizer.minimize(loss_op) |

| 6 | 8 | |

| 6 | 9 | # Evaluate model (with test logits, for dropout to be disabled) |

| 6 | 10 | correct_pred = tf.equal(tf.argmax(prediction, 1), tf.argmax(Y, 1)) |

| 6 | 11 | accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32)) |

| 6 | 12 | |

| 6 | 13 | # Initialize the variables (i.e. assign their default value) |

| 6 | 14 | init = tf.global_variables_initializer() |

| 7 | 0 | # Start training |

| 7 | 1 | with tf.Session() as sess: |

| 7 | 2 | |

| 7 | 3 | # Run the initializer |

| 7 | 4 | sess.run(init) |

| 7 | 5 | |

| 7 | 6 | for step in range(1, training_steps+1): |

| 7 | 7 | batch_x, batch_y = mnist.train.next_batch(batch_size) |

| 7 | 8 | # Reshape data to get 28 seq of 28 elements |

| 7 | 9 | batch_x = batch_x.reshape((batch_size, timesteps, num_input)) |

| 7 | 10 | # Run optimization op (backprop) |

| 7 | 11 | sess.run(train_op, feed_dict={X: batch_x, Y: batch_y}) |

| 7 | 12 | if step % display_step == 0 or step == 1: |

| 7 | 13 | # Calculate batch loss and accuracy |

| 7 | 14 | loss, acc = sess.run([loss_op, accuracy], feed_dict={X: batch_x, |

| 7 | 15 | Y: batch_y}) |

| 7 | 16 | print("Step " + str(step) + ", Minibatch Loss= " + \ |

| 7 | 17 | "{:.4f}".format(loss) + ", Training Accuracy= " + \ |

| 7 | 18 | "{:.3f}".format(acc)) |

| 7 | 19 | |

| 7 | 20 | print("Optimization Finished!") |

| 7 | 21 | |

| 7 | 22 | # Calculate accuracy for 128 mnist test images |

| 7 | 23 | test_len = 128 |

| 7 | 24 | test_data = mnist.test.images[:test_len].reshape((-1, timesteps, num_input)) |

| 7 | 25 | test_label = mnist.test.labels[:test_len] |

| 7 | 26 | print("Testing Accuracy:", \ |

| 7 | 27 | sess.run(accuracy, feed_dict={X: test_data, Y: test_label})) |

cells_outputs

| cell_id | type | line_no | data |

|---|---|---|---|

| 2 | text | 0 | Extracting /tmp/data/train-images-idx3-ubyte.gz |

| 2 | text | 1 | Extracting /tmp/data/train-labels-idx1-ubyte.gz |

| 2 | text | 2 | Extracting /tmp/data/t10k-images-idx3-ubyte.gz |

| 2 | text | 3 | Extracting /tmp/data/t10k-labels-idx1-ubyte.gz |

| 7 | text | 0 | Step 1, Minibatch Loss= 2.6268, Training Accuracy= 0.102 |

| 7 | text | 1 | Step 200, Minibatch Loss= 2.0722, Training Accuracy= 0.328 |

| 7 | text | 2 | Step 400, Minibatch Loss= 1.9181, Training Accuracy= 0.336 |

| 7 | text | 3 | Step 600, Minibatch Loss= 1.8858, Training Accuracy= 0.336 |

| 7 | text | 4 | Step 800, Minibatch Loss= 1.7022, Training Accuracy= 0.422 |

| 7 | text | 5 | Step 1000, Minibatch Loss= 1.6365, Training Accuracy= 0.477 |

| 7 | text | 6 | Step 1200, Minibatch Loss= 1.6691, Training Accuracy= 0.516 |

| 7 | text | 7 | Step 1400, Minibatch Loss= 1.4626, Training Accuracy= 0.547 |

| 7 | text | 8 | Step 1600, Minibatch Loss= 1.4707, Training Accuracy= 0.539 |

| 7 | text | 9 | Step 1800, Minibatch Loss= 1.4087, Training Accuracy= 0.570 |

| 7 | text | 10 | Step 2000, Minibatch Loss= 1.3033, Training Accuracy= 0.570 |

| 7 | text | 11 | Step 2200, Minibatch Loss= 1.3773, Training Accuracy= 0.508 |

| 7 | text | 12 | Step 2400, Minibatch Loss= 1.3092, Training Accuracy= 0.570 |

| 7 | text | 13 | Step 2600, Minibatch Loss= 1.2272, Training Accuracy= 0.609 |

| 7 | text | 14 | Step 2800, Minibatch Loss= 1.1827, Training Accuracy= 0.633 |

| 7 | text | 15 | Step 3000, Minibatch Loss= 1.0453, Training Accuracy= 0.641 |

| 7 | text | 16 | Step 3200, Minibatch Loss= 1.0400, Training Accuracy= 0.648 |

| 7 | text | 17 | Step 3400, Minibatch Loss= 1.1145, Training Accuracy= 0.656 |

| 7 | text | 18 | Step 3600, Minibatch Loss= 0.9884, Training Accuracy= 0.688 |

| 7 | text | 19 | Step 3800, Minibatch Loss= 1.0395, Training Accuracy= 0.703 |

| 7 | text | 20 | Step 4000, Minibatch Loss= 1.0096, Training Accuracy= 0.664 |

| 7 | text | 21 | Step 4200, Minibatch Loss= 0.8806, Training Accuracy= 0.758 |

| 7 | text | 22 | Step 4400, Minibatch Loss= 0.9090, Training Accuracy= 0.766 |

| 7 | text | 23 | Step 4600, Minibatch Loss= 1.0060, Training Accuracy= 0.703 |

| 7 | text | 24 | Step 4800, Minibatch Loss= 0.8954, Training Accuracy= 0.703 |

| 7 | text | 25 | Step 5000, Minibatch Loss= 0.8163, Training Accuracy= 0.750 |

| 7 | text | 26 | Step 5200, Minibatch Loss= 0.7620, Training Accuracy= 0.773 |

| 7 | text | 27 | Step 5400, Minibatch Loss= 0.7388, Training Accuracy= 0.758 |

| 7 | text | 28 | Step 5600, Minibatch Loss= 0.7604, Training Accuracy= 0.695 |

| 7 | text | 29 | Step 5800, Minibatch Loss= 0.7459, Training Accuracy= 0.734 |

| 7 | text | 30 | Step 6000, Minibatch Loss= 0.7448, Training Accuracy= 0.734 |

| 7 | text | 31 | Step 6200, Minibatch Loss= 0.7208, Training Accuracy= 0.773 |

| 7 | text | 32 | Step 6400, Minibatch Loss= 0.6557, Training Accuracy= 0.773 |

| 7 | text | 33 | Step 6600, Minibatch Loss= 0.8616, Training Accuracy= 0.758 |

| 7 | text | 34 | Step 6800, Minibatch Loss= 0.6089, Training Accuracy= 0.773 |

| 7 | text | 35 | Step 7000, Minibatch Loss= 0.5020, Training Accuracy= 0.844 |

| 7 | text | 36 | Step 7200, Minibatch Loss= 0.5980, Training Accuracy= 0.812 |

| 7 | text | 37 | Step 7400, Minibatch Loss= 0.6786, Training Accuracy= 0.766 |

| 7 | text | 38 | Step 7600, Minibatch Loss= 0.4891, Training Accuracy= 0.859 |

| 7 | text | 39 | Step 7800, Minibatch Loss= 0.7042, Training Accuracy= 0.797 |

| 7 | text | 40 | Step 8000, Minibatch Loss= 0.4200, Training Accuracy= 0.859 |

| 7 | text | 41 | Step 8200, Minibatch Loss= 0.6442, Training Accuracy= 0.742 |

| 7 | text | 42 | Step 8400, Minibatch Loss= 0.5569, Training Accuracy= 0.828 |

| 7 | text | 43 | Step 8600, Minibatch Loss= 0.5838, Training Accuracy= 0.836 |

| 7 | text | 44 | Step 8800, Minibatch Loss= 0.5579, Training Accuracy= 0.812 |

| 7 | text | 45 | Step 9000, Minibatch Loss= 0.4337, Training Accuracy= 0.867 |

| 7 | text | 46 | Step 9200, Minibatch Loss= 0.4366, Training Accuracy= 0.844 |

| 7 | text | 47 | Step 9400, Minibatch Loss= 0.5051, Training Accuracy= 0.844 |

| 7 | text | 48 | Step 9600, Minibatch Loss= 0.5244, Training Accuracy= 0.805 |

| 7 | text | 49 | Step 9800, Minibatch Loss= 0.4932, Training Accuracy= 0.805 |

| 7 | text | 50 | Step 10000, Minibatch Loss= 0.4833, Training Accuracy= 0.852 |

| 7 | text | 51 | Optimization Finished! |

| 7 | text | 52 | Testing Accuracy: 0.882812 |

cells_outputs_kv

| cell_id | key | value |

|---|---|---|

| 2 | name | stdout |

| 2 | output_type | stream |

| 7 | name | stdout |

| 7 | output_type | stream |

cells_kv

| cell_id | key | value |

|---|---|---|

| 0 | cell_type | markdown |

| 0 | metadata | {'collapsed': True} |

| 1 | cell_type | markdown |

| 1 | metadata | |

| 2 | cell_type | code |

| 2 | execution_count | 1 |

| 2 | metadata | {'collapsed': False} |

| 3 | cell_type | code |

| 3 | execution_count | 2 |

| 3 | metadata | {'collapsed': False} |

| 4 | cell_type | code |

| 4 | execution_count | 3 |

| 4 | metadata | {'collapsed': True} |

| 5 | cell_type | code |

| 5 | execution_count | 4 |

| 5 | metadata | {'collapsed': False} |

| 6 | cell_type | code |

| 6 | execution_count | 5 |

| 6 | metadata | {'collapsed': True} |

| 7 | cell_type | code |

| 7 | execution_count | 6 |

| 7 | metadata | {'collapsed': False} |

| 8 | cell_type | code |

| 8 | execution_count | |

| 8 | metadata | {'collapsed': True} |

metadata_kernelspec

| key | value |

|---|---|

| display_name | Python [default] |

| language | python |

| name | python2 |

metadata_language_info

| key | value |

|---|---|

| codemirror_mode_name | ipython |

| codemirror_mode_version | 2 |

| file_extension | .py |

| mimetype | text/x-python |

| name | python |

| nbconvert_exporter | python |

| pygments_lexer | ipython2 |

| version | 2.7.12 |

metadata_kv

kv

| key | value |

|---|---|

| nbformat | 4 |

| nbformat_minor | 0 |

Any feedback or comments would be appreciated.

from sqlitebiter.

Thank you for your request.

That's an interesting feature. I will consider it for a future release.


@thombashi oh, great - looks interesting; I'll try to have a play in the next day or two and get comments back to you.

Thanks,

--tony


One of the things I'd like to be able to do is build a simple search tool around notebooks. It's great that I can extract content from multiple notebooks into the same tables, but it would also be useful if there were another table that listed notebook_id and the notebook filepath/filename (or maybe directory path and filename as separate columns), with notebook_id then used, e.g., as part of a compound primary key in the other tables. Then I could run queries on the content of a single notebook, or pull back the name of a notebook that contained a particular search term.

As an example, I note I can get all the content back from a single cell as a string separated by line breaks using a query of the form:

SELECT cell_id, GROUP_CONCAT(text, '\n')

FROM (

SELECT cell_id, text

FROM cells_source

ORDER BY cell_id, line_no

)

GROUP BY cell_id;
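One detail that may be worth checking when trying this: SQLite does not interpret backslash escapes inside string literals, so when the query is typed into the sqlite3 shell, '\n' is a two-character separator (a backslash followed by n) rather than a line break. The sketch below uses char(10) for a real newline instead. It runs against made-up rows in an in-memory database; only the table and column names come from the schema posted above. SQLite's documentation describes the GROUP_CONCAT order as arbitrary by default, so the ordered subquery is a widely used convention rather than a documented guarantee.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE cells_source "
    "(cell_id INTEGER NOT NULL, line_no INTEGER NOT NULL, text TEXT)"
)

# Hypothetical rows, deliberately inserted out of order.
conn.executemany("INSERT INTO cells_source VALUES (?, ?, ?)", [
    (1, 1, "batch_size = 128"),
    (0, 0, "import tensorflow as tf"),
    (1, 0, "learning_rate = 0.001"),
])

# char(10) is a newline character; the ordered subquery feeds the lines
# of each cell to GROUP_CONCAT in line_no order.
QUERY = """
SELECT cell_id, GROUP_CONCAT(text, char(10))
FROM (
    SELECT cell_id, text
    FROM cells_source
    ORDER BY cell_id, line_no
)
GROUP BY cell_id
ORDER BY cell_id;
"""

cells = dict(conn.execute(QUERY).fetchall())
print(cells[1])
```

If the SQL is embedded in an ordinary (non-raw) Python string, Python itself turns '\n' into a newline before SQLite sees it, so the original form also works there.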

It would be nice if I could also do something like:

SELECT notebook, cell_id, GROUP_CONCAT(text, '\n')

FROM (

SELECT notebook_filename AS notebook, cell_id, text

FROM cells_source, notebooks

WHERE notebooks.notebook_id = cells_source.notebook_id

ORDER BY cell_id, line_no

)

GROUP BY cell_id;


Thank you for your feedback.

Notebook IDs (and the notebook filepath/filename) would certainly be useful.

I will consider that for a future release (probably the next release).


> another table that listed notebook_id and the notebook filepath/filename (or maybe, directory path and filename as separate columns)

I have added the above as the _source_info_ table.

Since sqlitebiter 0.19.0, the output SQLite database schema is as follows when you convert IPython Notebooks (you can specify multiple notebooks):

CREATE TABLE IF NOT EXISTS '_source_info_' (source_id INTEGER NOT NULL, dir_name TEXT, base_name TEXT NOT NULL, format_name TEXT NOT NULL, dst_table TEXT NOT NULL, size INTEGER, mtime INTEGER);

CREATE TABLE IF NOT EXISTS 'cells_source' (source_id INTEGER NOT NULL, cell_id INTEGER NOT NULL, line_no INTEGER NOT NULL, text TEXT);

CREATE TABLE IF NOT EXISTS 'cells_kv' (source_id INTEGER NOT NULL, cell_id INTEGER NOT NULL, key TEXT NOT NULL, value TEXT);

CREATE TABLE IF NOT EXISTS 'cells_outputs' (source_id INTEGER NOT NULL, cell_id INTEGER NOT NULL, type TEXT NOT NULL, line_no INTEGER NOT NULL, data BLOB);

CREATE TABLE IF NOT EXISTS 'cells_outputs_kv' (source_id INTEGER NOT NULL, cell_id INTEGER NOT NULL, key TEXT NOT NULL, value TEXT);

CREATE TABLE IF NOT EXISTS 'metadata_kernelspec' (source_id INTEGER NOT NULL, key TEXT NOT NULL, value TEXT NOT NULL);

CREATE TABLE IF NOT EXISTS 'metadata_language_info' (source_id INTEGER NOT NULL, key TEXT NOT NULL, value TEXT NOT NULL);

CREATE TABLE IF NOT EXISTS 'kv' (source_id INTEGER NOT NULL, key TEXT NOT NULL, value TEXT);

Some of the attribute names differ from the ones in your proposal, so that they can also be used for file formats other than IPython Notebook:

- notebook_id -> source_id
- filepath/filename -> dir_name, base_name

Now you can run the example queries from your previous post as follows:

SELECT cell_id, GROUP_CONCAT(text, '\n')

FROM (

SELECT cell_id, text

FROM cells_source

ORDER BY cell_id, line_no

)

GROUP BY cell_id;

SELECT notebook, cell_id, GROUP_CONCAT(text, '\n')

FROM (

SELECT base_name AS notebook, cell_id, text

FROM cells_source, _source_info_

WHERE _source_info_.source_id = cells_source.source_id

ORDER BY cell_id, line_no

)

GROUP BY cell_id;
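When several notebooks are loaded into the same database, cells from different notebooks can share a cell_id, so grouping by cell_id alone would concatenate their text together. As a hedged sketch of the search use case discussed earlier in this thread, the example below groups by source_id as well and returns the notebook name for each matching cell. The schema is copied from the statements above (with the quotes around table names dropped); the helper name `find_cells` and all sample rows, including the format_name value, are assumptions made for illustration.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS _source_info_ (source_id INTEGER NOT NULL, dir_name TEXT, base_name TEXT NOT NULL, format_name TEXT NOT NULL, dst_table TEXT NOT NULL, size INTEGER, mtime INTEGER);
CREATE TABLE IF NOT EXISTS cells_source (source_id INTEGER NOT NULL, cell_id INTEGER NOT NULL, line_no INTEGER NOT NULL, text TEXT);
"""


def find_cells(conn, term):
    """Return (notebook, cell_id, cell_text) for cells whose source contains term."""
    query = """
        SELECT notebook, cell_id, cell_text
        FROM (
            SELECT source_id, notebook, cell_id,
                   GROUP_CONCAT(text, char(10)) AS cell_text
            FROM (
                SELECT i.source_id, i.base_name AS notebook, s.cell_id, s.text
                FROM cells_source AS s
                JOIN _source_info_ AS i ON i.source_id = s.source_id
                ORDER BY i.source_id, s.cell_id, s.line_no
            )
            GROUP BY source_id, cell_id
        )
        WHERE cell_text LIKE '%' || ? || '%'
        ORDER BY source_id, cell_id
    """
    return conn.execute(query, (term,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)

# Hypothetical notebooks; both contain a cell with cell_id 0.
conn.executemany("INSERT INTO _source_info_ VALUES (?, ?, ?, ?, ?, ?, ?)", [
    (1, "nb", "rnn.ipynb", "ipynb", "cells_source", None, None),
    (2, "nb", "cnn.ipynb", "ipynb", "cells_source", None, None),
])
conn.executemany("INSERT INTO cells_source VALUES (?, ?, ?, ?)", [
    (1, 0, 0, "import tensorflow as tf"),
    (1, 0, 1, "from tensorflow.contrib import rnn"),
    (2, 0, 0, "import numpy as np"),
    (2, 1, 0, "x = np.zeros(3)"),
])

for notebook, cell_id, cell_text in find_cells(conn, "tensorflow"):
    print(notebook, cell_id)
    print(cell_text)
```

Note that LIKE in SQLite is case-insensitive for ASCII characters by default, which is usually what a simple search tool wants.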

I'll close the issue.

Feel free to reopen it if you still have any problems with this.
