Comments (15)

Of course you need to test every server and every database.

But for the same server, you don't need to test json and plaintext for each database.

Only the database tests need to be repeated.

The json and plaintext results will be the same with any database, so we don't need to repeat them.

For example, passenger mri will have the same results in json and plaintext with any database.

So we only need to test them once per server, not once per database.
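To make the rule concrete, here is a minimal sketch that lists json and plaintext tests repeated on the same web server. It assumes the usual `benchmark_config.json` layout (a `tests` list holding named entries with `webserver`, `database` and `*_url` keys); the function name and the sample data are hypothetical and not taken from any real framework directory.

```python
from collections import defaultdict

# Test types that never touch the database.
DB_FREE_KEYS = ("json_url", "plaintext_url")

def redundant_db_free_tests(config):
    """Map (webserver, url_key) to the test names that repeat it on one server."""
    seen = defaultdict(list)
    for group in config.get("tests", []):
        for name, test in group.items():
            server = test.get("webserver", "None")
            for key in DB_FREE_KEYS:
                if key in test:
                    seen[(server, key)].append(name)
    # Only combinations declared more than once are candidates for removal.
    return {k: v for k, v in seen.items() if len(v) > 1}

# Hypothetical config: puma is tested with two databases, unicorn with one.
sample = {
    "framework": "example",
    "tests": [{
        "default": {"webserver": "puma", "database": "Postgres",
                    "json_url": "/json", "plaintext_url": "/plaintext", "db_url": "/db"},
        "mysql": {"webserver": "puma", "database": "MySQL",
                  "json_url": "/json", "plaintext_url": "/plaintext", "db_url": "/db"},
        "unicorn": {"webserver": "unicorn", "database": "Postgres",
                    "json_url": "/json", "plaintext_url": "/plaintext", "db_url": "/db"},
    }],
}

result = redundant_db_free_tests(sample)
for (server, key), names in result.items():
    print(f"{server}: {key} declared in {names}")
```

In the sample, only the puma entries are flagged, because unicorn declares json and plaintext once. A check like this only looks at the server, so a variant that swaps the JSON library on the same server would still need a manual decision.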

from frameworkbenchmarks.

@joanhey, do I need to remove only the falcon [meinheld-orjson] test?

All other tests have different http servers.

from frameworkbenchmarks.

@joanhey, the PyPy variants (flask-raw & flask) are already marked as broken: f8e8239#diff-bf7a33c9cd47aa475c2802732158ab4d7009ed11dafefea8a6114204558925d3R112

from frameworkbenchmarks.

@remittor orjson looks like a different json lib, but the plaintext and fortunes tests don't use json.

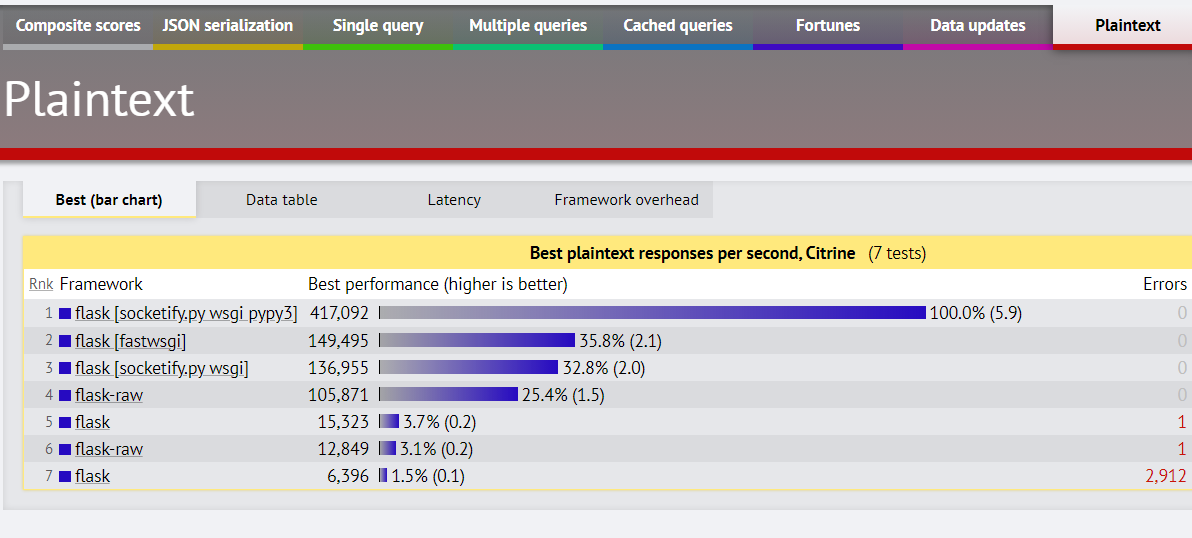

About flask in plaintext and also json:

from frameworkbenchmarks.

I don't know the difference between Sinatra and Sinatra Sequel.

But in the plaintext test, the results are identical.

EDIT:

Sequel is only an ORM, so we can remove all of its json and plaintext tests.

b2b1b91

from frameworkbenchmarks.

The difference between Sinatra and Sinatra Sequel is the ORM. Sinatra uses ActiveRecord and Sinatra Sequel uses Sequel.

Of course, neither the JSON nor the plaintext tests touch the database, so it doesn't matter in those cases.

One issue with the ruby benchmarks is that they all test MySQL and Postgres and three different servers (puma, unicorn, passenger). Every combination has a different performance profile, but I think the puma-postgres combination is likely to be the fastest.

Also the ruby tests are quite old (except for rails). I just did a pull request for Roda to update it and will also update the other ones soon. This should make some difference in the performance of the ruby benchmarks.

from frameworkbenchmarks.

@timuckun remember to delete the extra tests in Roda

Thank you

from frameworkbenchmarks.

Which are the extra tests? Do you mean the MySQL tests, the other servers, or the plaintext/json tests?

It seems to me there is some value in knowing which of the various ruby servers performs best under these loads.

Perhaps a good idea is to test the servers in the currently broken rack tests and then use the fastest one in the rest of the ruby tests. Rack is the underlying library in virtually all the ruby frameworks so that would establish a good baseline for testing various threading/fiber/forking/async tests.

from frameworkbenchmarks.

Indeed, the web server chosen will respond differently depending on the latency of the application.

So, IMO, it's a good idea to test all the possible combinations.

Alternatively, maybe one web server per language should be imposed (gunicorn for Python, for example).

from frameworkbenchmarks.

If you test a JSON lib, you don't need the Plaintext and Fortunes tests, as they don't use JSON.

For every server, it is OK to have all the tests.

But with the same server and a different database, we don't need to repeat the JSON and Plaintext tests.

from frameworkbenchmarks.

The way ruby works is that you use the same class for the functionality and a different invocation depending on the server.

For example:

bundle exec passenger start ....

bundle exec unicorn ...

bundle exec puma ...

All of these read the same config.ru file, which executes whatever class is defined in there. Each docker file is slightly different, but the code is the same for all of the servers. The path of least resistance is to repeat all the tests for each server.

I am happy to get rid of the mysql tests, but in the case of ORMs it might be useful to see how the performance of the ORM and the client libs differs between the databases.

As mentioned above here is what I propose.

I would fix the broken ruby/rack tests and test all the servers in that project, and then limit the other ruby tests to whichever server is the fastest. Perhaps the rack test could also test both databases using native libs. This would establish a baseline for all ruby-based benchmarks, as they all depend on rack.

Once that baseline is established, each framework would only test its own project with one server and one database, using whatever ORM or library it comes bundled with.

I don't think every server needs to be tested with both databases, so I would use the most widely used server (puma) to test both databases; the rest would only be tested with postgres.
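As a rough illustration of how much the baseline matrix shrinks under this proposal, here is a small sketch. The server and database names come from the discussion above; the function names and the idea of expressing the matrix as (server, database) pairs are assumptions made for the example.

```python
SERVERS = ["puma", "unicorn", "passenger"]
DATABASES = ["postgres", "mysql"]
PRIMARY_SERVER = "puma"  # assumed to be the most widely used server

def full_matrix():
    """Every server paired with every database."""
    return [(s, d) for s in SERVERS for d in DATABASES]

def proposed_matrix():
    """puma with both databases, the other servers with postgres only."""
    return [
        (s, d)
        for s in SERVERS
        for d in DATABASES
        if s == PRIMARY_SERVER or d == "postgres"
    ]

print(len(full_matrix()), "combinations in the full matrix")
print(len(proposed_matrix()), "combinations in the proposed matrix")
for server, database in proposed_matrix():
    print(f"  {server} + {database}")
```

For the rack baseline this gives four combinations instead of six; the individual frameworks would then run a single combination each.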

from frameworkbenchmarks.

I understand what you are saying. I can remove it from the benchmarks.json for the additional tests.

from frameworkbenchmarks.

In the directories frameworks/JavaScript and frameworks/TypeScript there are often unnecessary test duplicates. For example, this applies to nodejs, fastify, etc.

By the way, not only json and plaintext are repeated. This also applies to tests involving databases. In tests with json and plaintext (where benchmark_config -> display_name does not mention any database), it does not make sense to involve the database.

from frameworkbenchmarks.

@KostyaTretyak

I don't see any repeated tests in nodejs, fastify, and nest except for chakra.

from frameworkbenchmarks.

@JHyeok, if you see a mix of, for example, plaintext_url with db_url, these are duplicates. And there are indeed many, including in nodejs, fastify, nest and so on.

It makes no sense to make such a mix, since a test's results should contain statistics either for plaintext and json or for the databases.
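One way to list such mixes is sketched below. It assumes the common `benchmark_config.json` URL keys (`plaintext_url`, `json_url`, `db_url`, `query_url`, `fortune_url`, `update_url`, `cached_query_url`); the function name and the sample entries are hypothetical.

```python
DB_FREE_KEYS = {"json_url", "plaintext_url"}
DB_KEYS = {"db_url", "query_url", "fortune_url", "update_url", "cached_query_url"}

def mixed_entries(config):
    """Return test entries that declare both database-free and database URLs."""
    mixed = {}
    for group in config.get("tests", []):
        for name, test in group.items():
            db_free = sorted(DB_FREE_KEYS.intersection(test))
            db_bound = sorted(DB_KEYS.intersection(test))
            if db_free and db_bound:
                mixed[name] = (db_free, db_bound)
    return mixed

# Hypothetical config with one mixed entry and two clean ones.
sample = {
    "framework": "example",
    "tests": [{
        "default": {"plaintext_url": "/plaintext", "json_url": "/json", "db_url": "/db"},
        "postgres": {"db_url": "/db", "query_url": "/queries?queries="},
        "plain": {"plaintext_url": "/plaintext"},
    }],
}

mixed = mixed_entries(sample)
for name, (db_free, db_bound) in mixed.items():
    print(f"{name}: mixes {db_free} with {db_bound}")
```

Whether a flagged entry really is a duplicate still depends on what the other entries of the same framework already cover.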

from frameworkbenchmarks.
