Comments (14)

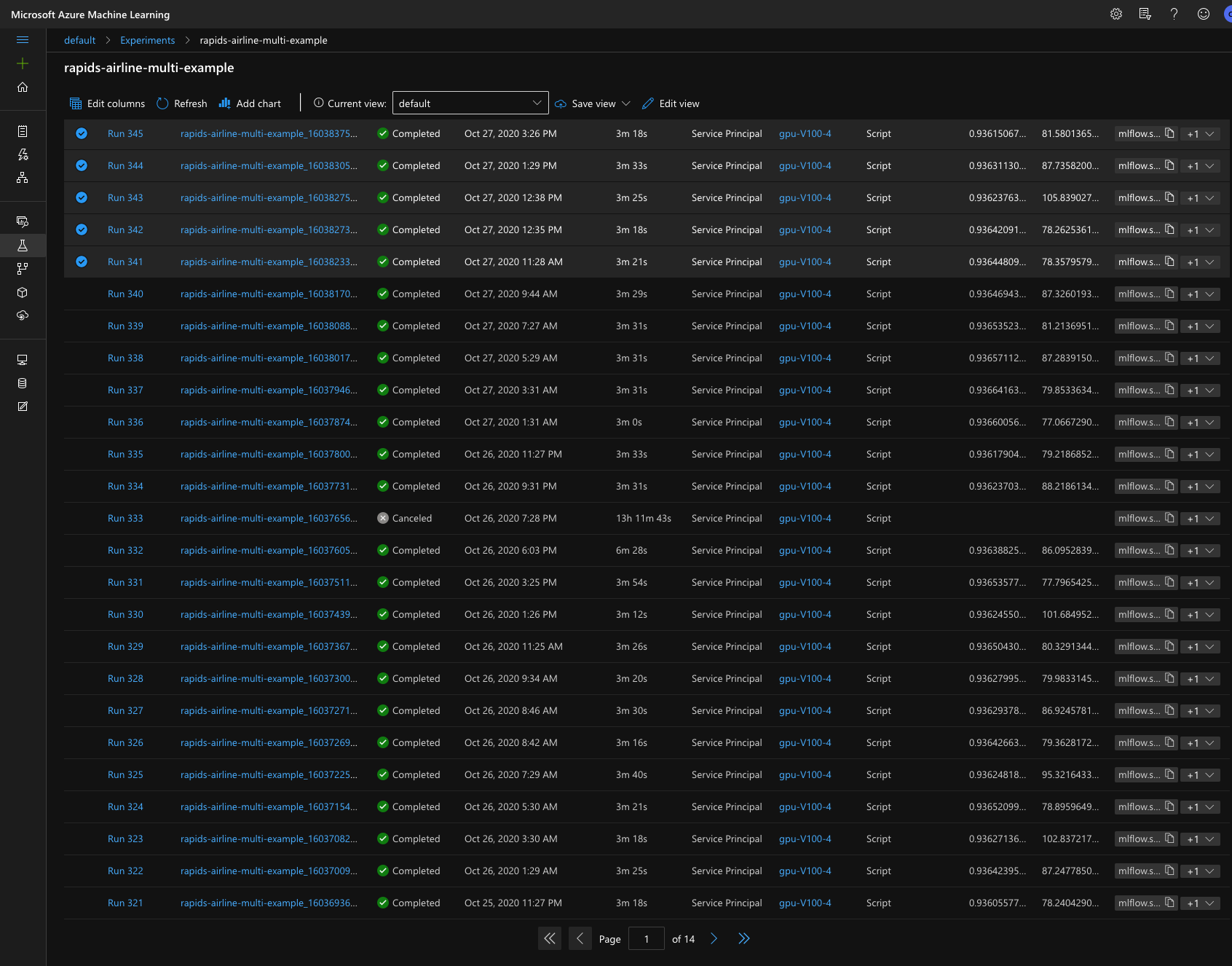

for full context, run 333 is the second one that got stuck:

I'll have the AML engineering team check if this is something on our end

from azureml-examples.

logs:

2020/10/25 06:27:49 logger.go:297: Attempt 1 of http call to http://10.0.0.5:16384/sendlogstoartifacts/info

2020/10/25 06:27:49 logger.go:297: Attempt 1 of http call to http://10.0.0.5:16384/sendlogstoartifacts/status

[2020-10-25T06:27:51.919906] Entering context manager injector.

[context_manager_injector.py] Command line Options: Namespace(inject=['ProjectPythonPath:context_managers.ProjectPythonPath', 'Dataset:context_managers.Datasets', 'RunHistory:context_managers.RunHistory', 'TrackUserError:context_managers.TrackUserError', 'UserExceptions:context_managers.UserExceptions'], invocation=['train.py', '--data_dir', 'DatasetConsumptionConfig:input_c31b4162', '--n_bins', '32', '--compute', 'multi-GPU', '--cv-folds', '1'])

Initialize DatasetContextManager.

Starting the daemon thread to refresh tokens in background for process with pid = 241

Set Dataset input_c31b4162's target path to /tmp/tmp3686hv94

Enter __enter__ of DatasetContextManager

SDK version: azureml-core==1.15.0 azureml-dataprep==2.3.0. Session id: 4ccb97c4-cd0e-4a5d-9d43-ed922b9d6add. Run id: rapids-airline-multi-example_1603607238_498492de.

Processing 'input_c31b4162'.

Globalization is not supported. Running in culture invariant mode (DOTNET_SYSTEM_GLOBALIZATION_INVARIANT='true').

Could not refresh EngineServer credentials in rslex: No Lariat Runtime Environment is active, please initialize an Environment.

Could not refresh EngineServer credentials in rslex: No Lariat Runtime Environment is active, please initialize an Environment.

Processing dataset FileDataset

{

"source": [

"https://airlinedataset.blob.core.windows.net/airline-10years/*"

],

"definition": [

"GetFiles"

],

"registration": {

"id": "a13fb474-eb17-43e7-9432-da7a83bef4b3",

"name": null,

"version": null,

"workspace": "Workspace.create(name='default', subscription_id='6560575d-fa06-4e7d-95fb-f962e74efd7a', resource_group='azureml-examples')"

}

}

Mounting input_c31b4162 to /tmp/tmp3686hv94.

Globalization is not supported. Running in culture invariant mode (DOTNET_SYSTEM_GLOBALIZATION_INVARIANT='true').

Could not refresh EngineServer credentials in rslex: No Lariat Runtime Environment is active, please initialize an Environment.

Could not refresh EngineServer credentials in rslex: No Lariat Runtime Environment is active, please initialize an Environment.

Mounted input_c31b4162 to /tmp/tmp3686hv94 as folder.

Exit __enter__ of DatasetContextManager

Entering Run History Context Manager.

Current directory: /mnt/batch/tasks/shared/LS_root/jobs/default/azureml/rapids-airline-multi-example_1603607238_498492de/mounts/workspaceblobstore/azureml/rapids-airline-multi-example_1603607238_498492de

Preparing to call script [ train.py ] with arguments: ['--data_dir', '$input_c31b4162', '--n_bins', '32', '--compute', 'multi-GPU', '--cv-folds', '1']

After variable expansion, calling script [ train.py ] with arguments: ['--data_dir', '/tmp/tmp3686hv94', '--n_bins', '32', '--compute', 'multi-GPU', '--cv-folds', '1']

Script type = None

---->>>> cuDF version <<<<----

0.15.0

---->>>> cuML version <<<<----

0.15.0

> RapidsCloudML

Compute, Data , Model, Cloud types ('multi-GPU', 'Parquet', 'RandomForest', 'Azure')

Multi-GPU selected

Client information <Client: 'tcp://127.0.0.1:38541' processes=4 threads=4, memory=473.42 GB>

multi-GPU

> Loading dataset from /tmp/tmp3686hv94/part*.parquet

GPU read

Reading using dask_cudf

cudf/utilities/bit.hpp(19): warning: cassert: [jitify] File not found

cudf/fixed_point/fixed_point.hpp(27): warning: cassert: [jitify] File not found

Ingestion completed in 3.674437305999163

Dataset descriptors: (Delayed('int-ff4f2b39-d9fb-48b3-acaf-357777eedce1'), 15)

Flight_Number_Reporting_Airline float32

Year float32

Quarter float32

Month float32

DayOfWeek float32

DOT_ID_Reporting_Airline float32

OriginCityMarketID float32

DestCityMarketID float32

DepTime float32

DepDelay float32

DepDel15 float32

ArrDel15 int32

ArrDelay float32

AirTime float32

Distance float32

dtype: object

---->>>> Training using GPUs <<<<----

CV fold 0 of 1

> Splitting train and test data

X_train shape and type(Delayed('int-7849fd69-4caf-43e3-a71f-7d7111a2ce31'), 13) <class 'dask_cudf.core.DataFrame'>

Split completed in 0.004588507996231783

> Training RandomForest estimator w/ hyper-params

Fitting multi-GPU daskRF

/opt/conda/envs/rapids/lib/python3.7/site-packages/cuml/dask/ensemble/randomforestclassifier.py:158: UserWarning: For reproducible results in Random Forest Classifier or for almost reproducible results in Random Forest Regressor, n_streams==1 is recommended. If n_streams is > 1, results may vary due to stream/thread timing differences, even when random_seed is set

**kwargs

/opt/conda/envs/rapids/lib/python3.7/site-packages/cuml/dask/ensemble/randomforestclassifier.py:158: UserWarning: For reproducible results in Random Forest Classifier or for almost reproducible results in Random Forest Regressor, n_streams==1 is recommended. If n_streams is > 1, results may vary due to stream/thread timing differences, even when random_seed is set

**kwargs

/opt/conda/envs/rapids/lib/python3.7/site-packages/cuml/dask/ensemble/randomforestclassifier.py:158: UserWarning: For reproducible results in Random Forest Classifier or for almost reproducible results in Random Forest Regressor, n_streams==1 is recommended. If n_streams is > 1, results may vary due to stream/thread timing differences, even when random_seed is set

**kwargs

/opt/conda/envs/rapids/lib/python3.7/site-packages/cuml/dask/ensemble/randomforestclassifier.py:158: UserWarning: For reproducible results in Random Forest Classifier or for almost reproducible results in Random Forest Regressor, n_streams==1 is recommended. If n_streams is > 1, results may vary due to stream/thread timing differences, even when random_seed is set

**kwargs

from azureml-examples.

rapids-airline-multi-example_1603607238_498492de

stuck for ~60 hours

2020/10/27 02:28:42 logger.go:297: Attempt 1 of http call to http://10.0.0.4:16384/sendlogstoartifacts/info

2020/10/27 02:28:42 logger.go:297: Attempt 1 of http call to http://10.0.0.4:16384/sendlogstoartifacts/status

[2020-10-27T02:28:44.486546] Entering context manager injector.

[context_manager_injector.py] Command line Options: Namespace(inject=['ProjectPythonPath:context_managers.ProjectPythonPath', 'Dataset:context_managers.Datasets', 'RunHistory:context_managers.RunHistory', 'TrackUserError:context_managers.TrackUserError', 'UserExceptions:context_managers.UserExceptions'], invocation=['train.py', '--data_dir', 'DatasetConsumptionConfig:input_fa2fd348', '--n_bins', '32', '--compute', 'multi-GPU', '--cv-folds', '1'])

Initialize DatasetContextManager.

Starting the daemon thread to refresh tokens in background for process with pid = 243

Set Dataset input_fa2fd348's target path to /tmp/tmp7azhoytw

Enter __enter__ of DatasetContextManager

SDK version: azureml-core==1.15.0 azureml-dataprep==2.3.0. Session id: f34101d7-fbb7-44e4-a93c-d5ea51f80c59. Run id: rapids-airline-multi-example_1603765696_115ff533.

Processing 'input_fa2fd348'.

Globalization is not supported. Running in culture invariant mode (DOTNET_SYSTEM_GLOBALIZATION_INVARIANT='true').

Could not refresh EngineServer credentials in rslex: No Lariat Runtime Environment is active, please initialize an Environment.

Could not refresh EngineServer credentials in rslex: No Lariat Runtime Environment is active, please initialize an Environment.

Processing dataset FileDataset

{

"source": [

"https://airlinedataset.blob.core.windows.net/airline-10years/*"

],

"definition": [

"GetFiles"

],

"registration": {

"id": "a13fb474-eb17-43e7-9432-da7a83bef4b3",

"name": null,

"version": null,

"workspace": "Workspace.create(name='default', subscription_id='6560575d-fa06-4e7d-95fb-f962e74efd7a', resource_group='azureml-examples')"

}

}

Mounting input_fa2fd348 to /tmp/tmp7azhoytw.

Globalization is not supported. Running in culture invariant mode (DOTNET_SYSTEM_GLOBALIZATION_INVARIANT='true').

Could not refresh EngineServer credentials in rslex: No Lariat Runtime Environment is active, please initialize an Environment.

Could not refresh EngineServer credentials in rslex: No Lariat Runtime Environment is active, please initialize an Environment.

Mounted input_fa2fd348 to /tmp/tmp7azhoytw as folder.

Exit __enter__ of DatasetContextManager

Entering Run History Context Manager.

Current directory: /mnt/batch/tasks/shared/LS_root/jobs/default/azureml/rapids-airline-multi-example_1603765696_115ff533/mounts/workspaceblobstore/azureml/rapids-airline-multi-example_1603765696_115ff533

Preparing to call script [ train.py ] with arguments: ['--data_dir', '$input_fa2fd348', '--n_bins', '32', '--compute', 'multi-GPU', '--cv-folds', '1']

After variable expansion, calling script [ train.py ] with arguments: ['--data_dir', '/tmp/tmp7azhoytw', '--n_bins', '32', '--compute', 'multi-GPU', '--cv-folds', '1']

Script type = None

---->>>> cuDF version <<<<----

0.15.0

---->>>> cuML version <<<<----

0.15.0

> RapidsCloudML

Compute, Data , Model, Cloud types ('multi-GPU', 'Parquet', 'RandomForest', 'Azure')

Multi-GPU selected

Client information <Client: 'tcp://127.0.0.1:43869' processes=4 threads=4, memory=473.42 GB>

multi-GPU

> Loading dataset from /tmp/tmp7azhoytw/part*.parquet

GPU read

Reading using dask_cudf

cudf/utilities/bit.hpp(19): warning: cassert: [jitify] File not found

cudf/fixed_point/fixed_point.hpp(27): warning: cassert: [jitify] File not found

Ingestion completed in 3.5310610010001255

Dataset descriptors: (Delayed('int-42f22444-d0ca-4fca-b470-b47043e92d23'), 15)

Flight_Number_Reporting_Airline float32

Year float32

Quarter float32

Month float32

DayOfWeek float32

DOT_ID_Reporting_Airline float32

OriginCityMarketID float32

DestCityMarketID float32

DepTime float32

DepDelay float32

DepDel15 float32

ArrDel15 int32

ArrDelay float32

AirTime float32

Distance float32

dtype: object

---->>>> Training using GPUs <<<<----

CV fold 0 of 1

> Splitting train and test data

X_train shape and type(Delayed('int-1482a934-84c1-485c-91eb-4c582ac99ab3'), 13) <class 'dask_cudf.core.DataFrame'>

Split completed in 0.00498011600029713

> Training RandomForest estimator w/ hyper-params

Fitting multi-GPU daskRF

/opt/conda/envs/rapids/lib/python3.7/site-packages/cuml/dask/ensemble/randomforestclassifier.py:158: UserWarning: For reproducible results in Random Forest Classifier or for almost reproducible results in Random Forest Regressor, n_streams==1 is recommended. If n_streams is > 1, results may vary due to stream/thread timing differences, even when random_seed is set

**kwargs

/opt/conda/envs/rapids/lib/python3.7/site-packages/cuml/dask/ensemble/randomforestclassifier.py:158: UserWarning: For reproducible results in Random Forest Classifier or for almost reproducible results in Random Forest Regressor, n_streams==1 is recommended. If n_streams is > 1, results may vary due to stream/thread timing differences, even when random_seed is set

**kwargs

/opt/conda/envs/rapids/lib/python3.7/site-packages/cuml/dask/ensemble/randomforestclassifier.py:158: UserWarning: For reproducible results in Random Forest Classifier or for almost reproducible results in Random Forest Regressor, n_streams==1 is recommended. If n_streams is > 1, results may vary due to stream/thread timing differences, even when random_seed is set

**kwargs

rapids-airline-multi-example_1603765696_115ff533

stuck for ~14 hours

from azureml-examples.

same thing as before: the compute cluster's max nodes is 2, and 2 runs got stuck for 13+ hours, causing subsequent runs to hit the 6-hour GHA timeout

@zronaghi fyi - is it possible the run is legitimately getting stuck fitting the RF? it seems to consistently get stuck there from these logs and what I remember about last time. would there be an easy way to avoid this if so?

from azureml-examples.

it is green again but leaving this issue open until the cause is fixed

from azureml-examples.

Does "green again" mean only some of the runs fail? I haven't seen this issue in my environment but will re-run with these modified scripts/notebooks to investigate. I'll update to RAPIDS 0.16 as well since it was released last week.

from azureml-examples.

it seems a low percent (maybe <5%?) of runs will get stuck - here is what it looks like in UI:

I manually cancelled run 309 after 57 hours of running. 2 runs need to get stuck at the same time for the next runs to stay queued indefinitely, causing the GitHub Action to fail after 6 hours. This seems likely enough to happen every couple of weeks or so at the current testing rate. After manually cancelling the two stuck runs, it recovers quickly.
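For anyone who wants to spot these before the queue backs up, here is a minimal sketch of the check I was doing by hand: flag anything that has been in a running state for longer than some budget. `RunRecord`, `find_stuck_runs`, and the status strings are hypothetical names for illustration, not part of the AML SDK; in practice the records would have to be filled in from wherever you list runs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class RunRecord:
    """Hypothetical stand-in for one run as it appears in a run listing."""
    run_id: str
    status: str          # assumed values: "Running", "Queued", "Completed"
    started_at: datetime


def find_stuck_runs(runs: List[RunRecord], now: datetime,
                    max_duration: timedelta) -> List[str]:
    """Return ids of runs that are still running after more than max_duration."""
    return [
        r.run_id
        for r in runs
        if r.status == "Running" and now - r.started_at > max_duration
    ]


if __name__ == "__main__":
    now = datetime(2020, 10, 27, 18, 0)
    runs = [
        RunRecord("run-a", "Running", now - timedelta(hours=57)),
        RunRecord("run-b", "Running", now - timedelta(minutes=20)),
        RunRecord("run-c", "Queued", now - timedelta(hours=3)),
    ]
    # Only runs that are both running and over budget are reported.
    print(find_stuck_runs(runs, now, timedelta(hours=1)))
```

Queued runs are deliberately ignored here, since in this issue they are the victims of the stuck runs rather than the cause.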

the repo has changed a bit since you first added these notebooks. please check the contributing guidelines, develop on a branch, and let us know if you have any issues - thanks for the contributions!

from azureml-examples.

I just completed multi-GPU HPO runs (100 jobs) and wasn't able to reproduce the issue. I submitted a PR with the RAPIDS 0.16 container and set max_run_duration_seconds to 30 minutes for multi-GPU training. Would this be helpful in case of failure? It would also be good to know if any of these tests fail with the latest container or if you've seen similar issues with other Dask tutorials.
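To illustrate the idea behind the time limit outside of AML, here is a rough local sketch using only the Python standard library: run the training command as a child process and give up once a wall-clock budget is exceeded. `run_with_time_limit` is a hypothetical helper, not the mechanism AML uses for max_run_duration_seconds, and the `train.py` invocation in the comment is only an example.

```python
import subprocess
import sys
from typing import List


def run_with_time_limit(cmd: List[str], limit_seconds: float) -> str:
    """Run cmd and return 'completed', 'failed', or 'timed_out'."""
    try:
        result = subprocess.run(cmd, timeout=limit_seconds)
    except subprocess.TimeoutExpired:
        # subprocess.run kills the direct child before raising this.
        return "timed_out"
    return "completed" if result.returncode == 0 else "failed"


if __name__ == "__main__":
    # Example only: a 30 minute budget, matching the limit in the PR.
    # status = run_with_time_limit([sys.executable, "train.py"], 30 * 60)
    status = run_with_time_limit(
        [sys.executable, "-c", "import time; time.sleep(5)"], 1.0
    )
    print(status)
```

Note that this only stops the direct child process; worker processes it spawned (for example a local Dask cluster) may need separate cleanup.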

from azureml-examples.

renaming the issue - the timeout will solve this, will keep the issue open for AML to investigate. i know users have complained about runs getting stuck

from azureml-examples.

MSFT internal ICM for investigation: https://portal.microsofticm.com/imp/v3/incidents/details/212111206/home

from azureml-examples.

from the investigation:

All good runs use the following versions of azureml-core, azureml-dataprep and cuda

>>SDK version: azureml-core==1.17.0 azureml-dataprep==2.4.2.

---->>>> cuDF version <<<<----

0.16.0a+1979.g2cda39b341

---->>>> cuML version <<<<----

0.16.0a+882.g5851f4140

The run stuck over 50 hours had:

SDK version: azureml-core==1.15.0 azureml-dataprep==2.3.0.

---->>>> cuDF version <<<<----

0.15.0

---->>>> cuML version <<<<----

0.15.0
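If it helps with comparing more runs, here is a small sketch that pulls the same version fields out of a driver log and diffs them between two runs. The regular expressions are assumptions based only on the log lines pasted in this thread (the `SDK version:` line and the `---->>>> cuDF version <<<<----` banners); `extract_versions` and `diff_versions` are hypothetical helper names.

```python
import re
from typing import Dict, Optional, Tuple

# Matches pins such as "azureml-core==1.15.0" on the "SDK version:" line.
_PIN = re.compile(r"(azureml-[a-z-]+)==(\S+)")
# Matches the cuDF/cuML banner and the version printed on a following line.
_RAPIDS = re.compile(r"-+>+\s*(cuDF|cuML) version\s*<+-+\s+(\S+)")


def extract_versions(log_text: str) -> Dict[str, str]:
    """Collect azureml-* and cuDF/cuML versions found in a log."""
    versions: Dict[str, str] = {}
    for name, ver in _PIN.findall(log_text):
        # The log ends the pin list with a period; drop it.
        versions[name] = ver.rstrip(".")
    for name, ver in _RAPIDS.findall(log_text):
        versions[name] = ver
    return versions


def diff_versions(
    a: Dict[str, str], b: Dict[str, str]
) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Return only the entries whose versions differ between two runs."""
    return {
        key: (a.get(key), b.get(key))
        for key in sorted(set(a) | set(b))
        if a.get(key) != b.get(key)
    }
```

Calling `diff_versions(extract_versions(stuck_log), extract_versions(good_log))` on two log strings would list each component with its (stuck, good) version pair.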

from azureml-examples.

Thanks for the update, so recent runs with RAPIDS 0.16 container haven't failed?

from azureml-examples.

this one failed or timed out: https://github.com/Azure/azureml-examples/actions/runs/348928004

from azureml-examples.

closing in favor of #284 - needs further investigation into why runs are getting stuck, but this does not seem particular to rapids

from azureml-examples.
